Do you have a laptop with 8 GB of RAM and want to run AI locally?

This article is an honest breakdown of what works, what barely runs, and what isn't worth downloading, with specific models and commands for each task. If you're not yet familiar with Ollama, start with the introductory article on what Ollama is and why you need it.


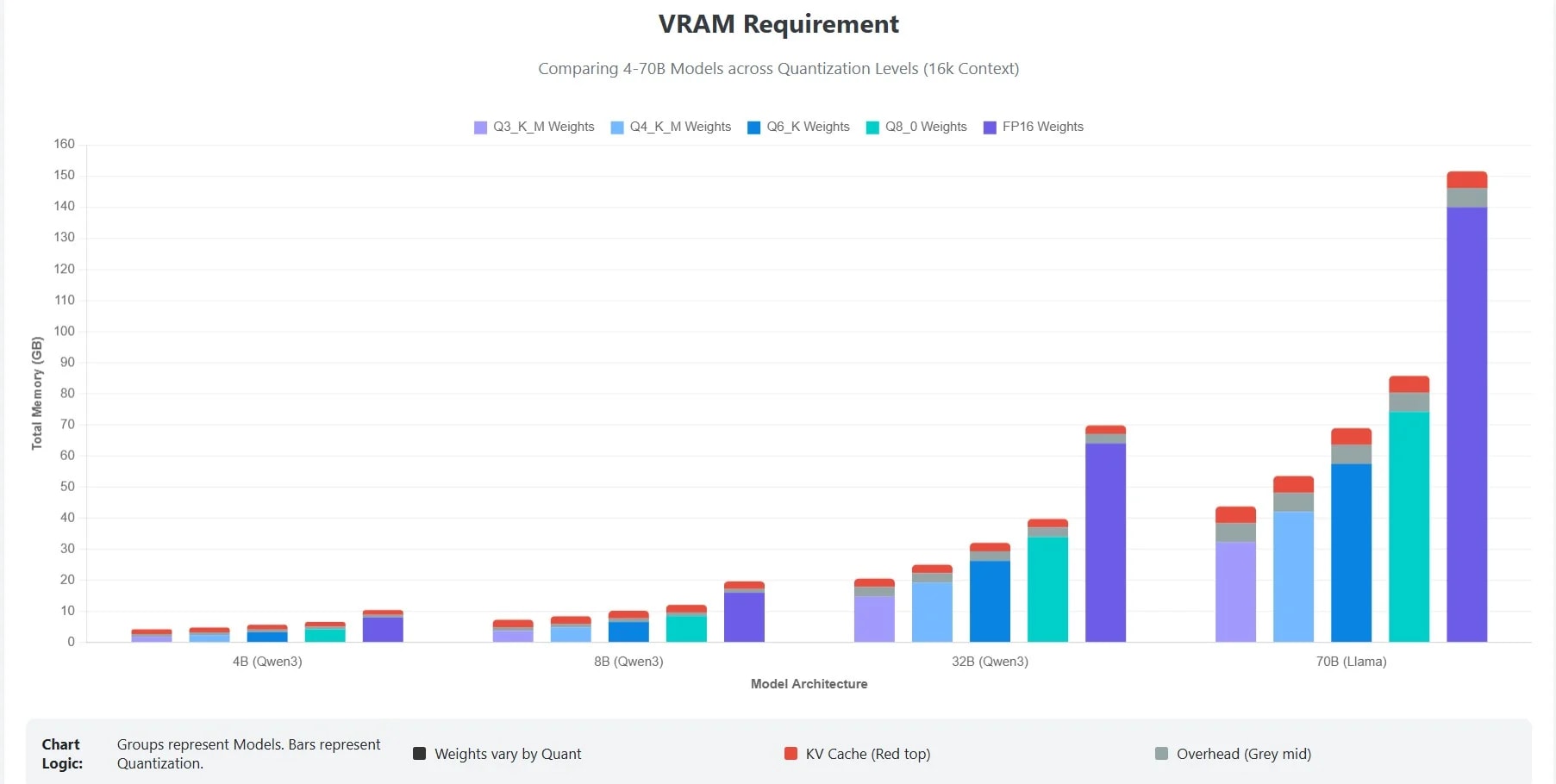

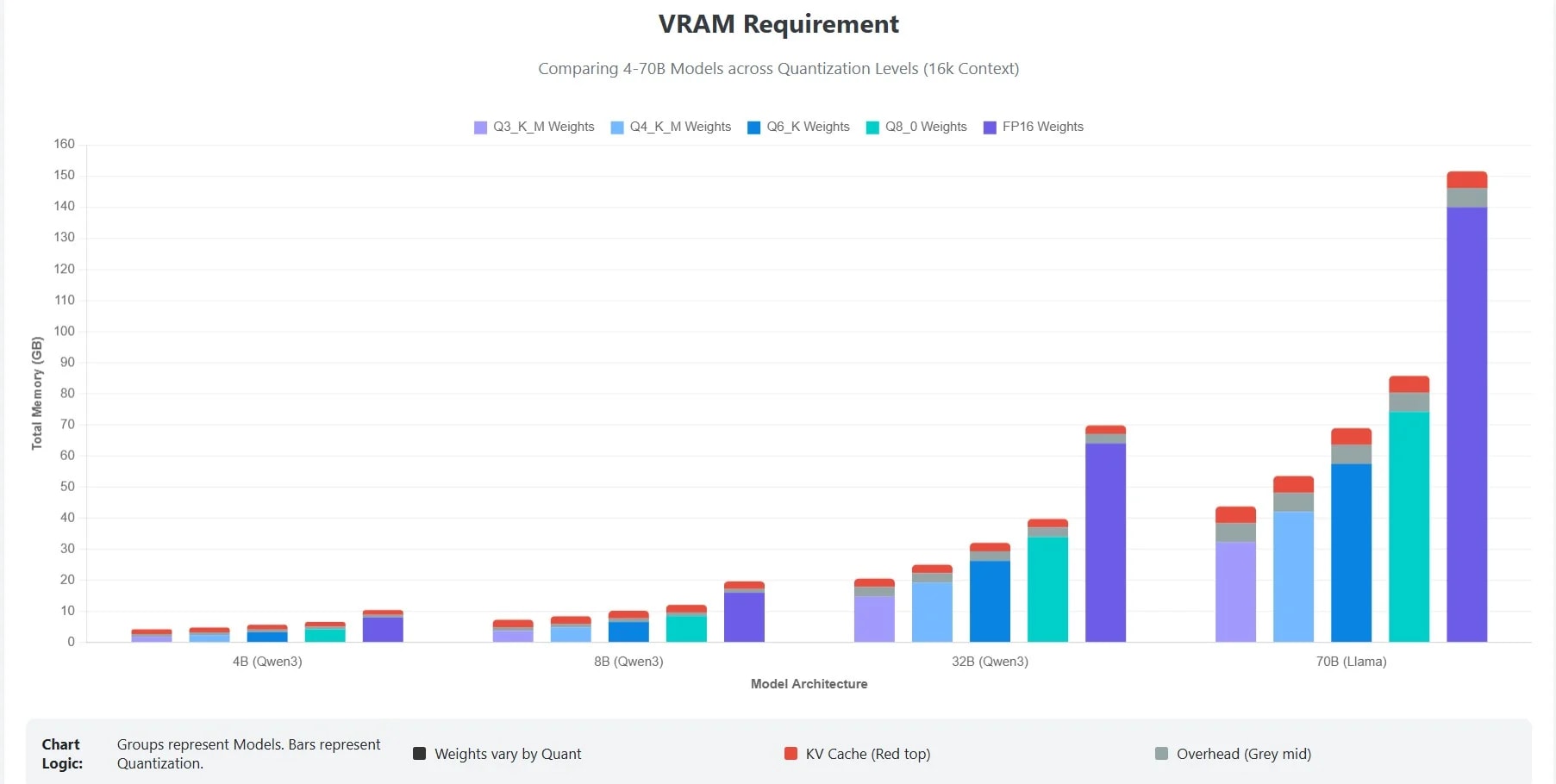

🎯 Honest arithmetic: how much RAM is actually left for the model

Short answer:

Of the 8 GB of RAM, only about 3–5 GB is actually available to the AI model. The rest is taken by the operating system, the browser, and basic processes. This sets the main rule: on 8 GB, models from 3B up to about 7B parameters in 4-bit quantization are the realistic range, and the smaller end of that range is the comfortable one.

8 GB RAM is not 8 GB for the model.

It's 8 GB minus the OS, minus Chrome, minus everything you forgot to close.

Before choosing a model, you need to understand the actual memory budget.

Here's a typical distribution on a system with 8 GB RAM:

- ✔️ Operating system: 1.5–2.5 GB (macOS closer to 2.5, Windows — 2, Linux — 1.5)

- ✔️ Browser (5–10 tabs): 1–2 GB

- ✔️ IDE (VS Code / IntelliJ): 0.5–1.5 GB

- ✔️ Background processes: 0.3–0.5 GB

Remaining for the model: 3–5 GB.
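The budget above is simple subtraction, and it is worth running with your own numbers. Here is a minimal sketch that uses the ranges from the list; the function name `remaining_for_model` is made up for this illustration. Taken literally, the worst case (every consumer at the top of its range) leaves much less than 3 GB, while closing the IDE brings the result close to the 3–5 GB quoted above.

```python
# Typical memory consumers on an 8 GB laptop, as (low, high) estimates in GB.
# The figures are the ranges quoted in the list above.
CONSUMERS_GB = {
    "operating system": (1.5, 2.5),
    "browser (5-10 tabs)": (1.0, 2.0),
    "IDE": (0.5, 1.5),
    "background processes": (0.3, 0.5),
}


def remaining_for_model(total_gb, consumers):
    """Return (worst_case, best_case) GB left after subtracting every consumer."""
    low_use = sum(low for low, _ in consumers.values())
    high_use = sum(high for _, high in consumers.values())
    return round(total_gb - high_use, 1), round(total_gb - low_use, 1)


worst, best = remaining_for_model(8.0, CONSUMERS_GB)
print(f"Everything open: {worst}-{best} GB left for the model")

# Closing the IDE hands its share back to the model.
no_ide = {name: rng for name, rng in CONSUMERS_GB.items() if name != "IDE"}
worst, best = remaining_for_model(8.0, no_ide)
print(f"IDE closed:      {worst}-{best} GB left for the model")
```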

According to LocalLLM.in, a 7B-parameter model in Q4_K_M quantization takes approximately 4–5 GB, plus 1–2 GB for the KV-cache and system overhead. This means a 7B model on 8 GB is possible, but only at the limit, and it's better to close all unnecessary applications.
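To see why 7B is "at the limit", compare the model's total footprint with the memory budget. The sketch below is only an illustration under the figures quoted above (4–5 GB of weights, 1–2 GB of overhead, 3–5 GB free); `fits_in_budget` is a hypothetical helper, not part of Ollama.

```python
def fits_in_budget(weights_gb, overhead_gb, budget_gb):
    """True if model weights plus KV-cache/runtime overhead fit in the budget."""
    return weights_gb + overhead_gb <= budget_gb


# Ranges quoted above for a 7B model in Q4_K_M.
light_7b = (4.0, 1.0)  # optimistic end: 4 GB weights + 1 GB overhead
heavy_7b = (5.0, 2.0)  # pessimistic end: 5 GB weights + 2 GB overhead

for budget in (3.0, 5.0):  # the 3-5 GB that typically remains on 8 GB
    print(
        f"budget {budget} GB:",
        "light 7B fits" if fits_in_budget(*light_7b, budget) else "light 7B does not fit",
        "|",
        "heavy 7B fits" if fits_in_budget(*heavy_7b, budget) else "heavy 7B does not fit",
    )
```

Only the optimistic case with the full 5 GB budget passes, which is why everything else has to be closed first.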

Practical rule for 8 GB:

- ✔️ Comfort zone: 1–3B parameter models (Q4_K_M) — leaves space for IDE and browser

- ✔️ Work zone: 7–8B parameter models (Q4_K_M) — need to close all unnecessary applications

- ❌ Red zone: 13B+ models — guaranteed freezes or swap to disk

Conclusion: Before choosing a model, close your browser, run ollama ps to see which models are already loaded, and check the free memory in your system monitor. On 8 GB, every gigabyte is worth its weight in gold.

🎯 For code: which model will replace Copilot on 8 GB

Code Autocompletion

For code autocompletion on 8 GB, the best choice is Qwen 2.5 Coder 3B or

Phi-4 Mini (3.8B) in Q4_K_M quantization. Both models leave enough

memory for VS Code and provide acceptable generation quality.

GitHub Copilot costs $10/month. A local code model is

$0/month and works offline. The only question is which model

will run on your hardware.

Coding is a task where even small models can be useful.

Autocompletion, function generation, code explanation, test writing —

this doesn't require GPT-4, it requires a fast and accurate model

that understands syntax.

Top models for code on 8 GB

1. Qwen 2.5 Coder 3B (Q4_K_M) — ~2.2 GB RAM

According to SitePoint, Qwen leads on the HumanEval benchmark among 7–8B class models. The 3B version is lighter but retains a strong specialization in code. It is trained on a large volume of programming code and technical documentation.

ollama pull qwen2.5-coder:3b

ollama run qwen2.5-coder:3b "Write a Python array sorting function"

2. Phi-4 Mini (3.8B) — ~2.3 GB RAM

According to SitePoint, Phi-4 Mini is the only model that works comfortably on 8 GB systems, delivering 15–20 tokens/sec on an M1 MacBook Air or a budget Linux laptop. It handles autocompletion, simple explanations, and light chat tasks well.

ollama pull phi4-mini

ollama run phi4-mini "Explain the difference between HashMap and TreeMap in Java"

3. DeepSeek Coder 1.3B (Q4_K_M) — ~1 GB RAM

The lightest model for code. Ideal for autocompletion in IDEs —

fast, doesn't overload the system, can be kept running in the background along

with VS Code, browser, and terminal.

ollama pull deepseek-coder:1.3b

ollama run deepseek-coder:1.3b

What to choose?

- ✔️ Need autocompletion in the background + open browser → DeepSeek Coder 1.3B

- ✔️ Need function generation and code explanation → Qwen 2.5 Coder 3B

- ✔️ Need a universal model for code and text → Phi-4 Mini

More details on autocompletion setup —

in the article Ollama + VS Code: a free alternative to GitHub Copilot.

Conclusion: On 8 GB, you can code with local AI.

Don't expect GPT-4 quality — but for daily autocompletion, boilerplate generation,

and code explanations, this is sufficient.
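The same models can also be called from a script instead of the terminal. Below is a minimal sketch, assuming Ollama is running locally on its default port 11434 and using its `/api/generate` HTTP endpoint. The helper names `build_request` and `generate` are made up for this example, and the small `num_ctx` value is just a memory-friendly assumption.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_request(model, prompt, num_ctx=2048):
    """Build a non-streaming /api/generate request for a locally pulled model."""
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,                  # return one JSON object, not a stream
        "options": {"num_ctx": num_ctx},  # small context keeps the KV-cache small
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt):
    """Send the request and return the generated text (Ollama must be running)."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=300) as resp:
        return json.loads(resp.read())["response"]


# Example (requires `ollama pull qwen2.5-coder:3b` first):
# print(generate("qwen2.5-coder:3b", "Write a Python array sorting function"))
```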

🎯 For text and communication: chat, summaries, translation


For text tasks on 8 GB, the optimal choice is Llama 3.2 3B for general

chat, Gemma 2B for maximum speed, or Phi-3 Mini for a balance

of quality and size. All three leave room for other software.

Not every task requires GPT-4. Summarizing text,

answering questions, rephrasing an article — a model that weighs less than one 4K movie

can handle this.

Text tasks are the broadest category: from simple chat to document analysis

and translation. On 8 GB, there's plenty to choose from here.

Top models for text on 8 GB

1. Llama 3.2 3B (Q4_K_M) — ~2 GB RAM

According to StudyHUB, Llama 3.1/3.2 is the most popular model on Ollama with over 111 million downloads. The 3B version is lighter but maintains quality in general conversations, summarization, and question answering. It supports 8 languages.

ollama pull llama3.2:3b

ollama run llama3.2:3b "Summarize the main idea of this text: ..."

2. Gemma 2B (Q4_K_M) — ~1.6 GB RAM

A model from Google DeepMind. The fastest in this category — ideal

when instant response and minimal system load are needed.

Quality is lower than Llama 3B, but for simple questions and text classification

— it's more than enough.

ollama pull gemma:2b

ollama run gemma:2b "Write a short description for this product: ..."

3. Phi-3 Mini (3.8B) — ~2.3 GB RAM

According to StudyHUB, Phi-3 Mini, at 2.3 GB, covers 90% of daily tasks. It runs fast even on CPU and is suitable for a Raspberry Pi 4/5.

ollama pull phi3:mini

ollama run phi3:mini "Translate to Ukrainian: The quick brown fox jumps over the lazy dog"

What to choose?

- ✔️ General chat and Q&A → Llama 3.2 3B

- ✔️ Maximum speed, minimum RAM → Gemma 2B

- ✔️ Balance of quality and size, daily use → Phi-3 Mini

If you want a beautiful graphical interface instead of the terminal —

see the article Ollama + Open WebUI: local ChatGPT in your browser.

Conclusion: For text tasks, 8 GB is comfortable

territory. 2–3B models work fast, leave space for other

programs, and provide sufficient quality for most daily needs.

🎯 For reasoning: math, logic, code debugging

For tasks requiring step-by-step thinking — math, logic problems,

debugging complex code — DeepSeek R1 8B in Q4 quantization works on 8 GB.

This is a "thinking" model: it's slower but more accurate on complex questions.

A regular model answers immediately. A reasoning model first thinks, step by step, and only then answers. It's like the difference between answering off the cuff and working it out on paper.

Reasoning models are a relatively new category. They work on the principle

of chain-of-thought: breaking down a task into steps, checking intermediate

results, and only then forming the final answer.
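In practice, the "thinking" often shows up in the raw output as a separate block before the final answer. Here is a small sketch for separating the two, assuming the model wraps its reasoning in `<think>...</think>` tags as DeepSeek R1 style models commonly do. Depending on the model and Ollama settings, the reasoning may be delivered differently, so treat the tag format as an assumption.

```python
import re

# Assumption: the model wraps its reasoning in <think>...</think> before the answer.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(text):
    """Return (reasoning, answer); reasoning is empty if no <think> block exists."""
    match = THINK_BLOCK.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    answer = (text[:match.start()] + text[match.end():]).strip()
    return reasoning, answer


# Hand-written sample output, not a real model response.
sample = "<think>3x = 22 - 7 = 15, so x = 5.</think>\nx = 5"
reasoning, answer = split_reasoning(sample)
print("Reasoning:", reasoning)
print("Answer:", answer)
```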

What works on 8 GB

1. DeepSeek R1 8B (Q4_K_M) — ~5 GB RAM

According to StudyHUB, DeepSeek R1 is a "thinking" model, an analogue of OpenAI o1. For tasks involving math, logical puzzles, and technical reasoning, it provides better results than a Llama 3.1 model of the same size. The trade-off: it responds more slowly because it "thinks" before answering.

ollama pull deepseek-r1:8b

ollama run deepseek-r1:8b "Find the error in this SQL query: SELECT * FROM users WHERE id = '5' AND active = true GROUP HAVING count > 1"

⚠️ Important: DeepSeek R1 8B takes ~5 GB RAM.

On an 8 GB system, this is at the limit — you need to close the browser, IDE, and everything

unnecessary. On macOS with unified memory, it works more stably than on Windows

with integrated graphics.

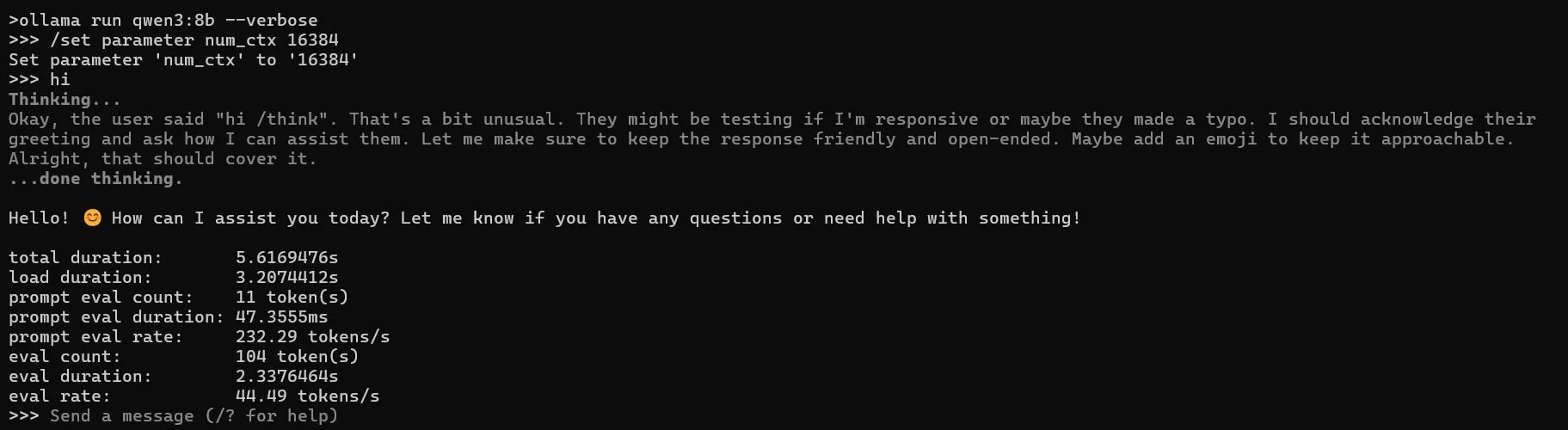

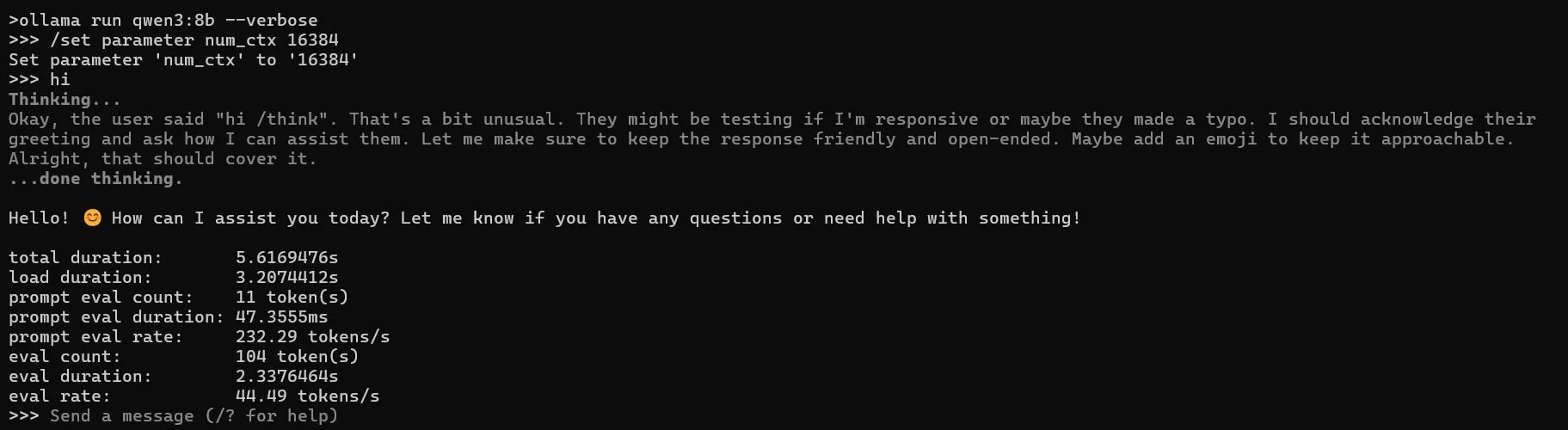

2. Qwen 3 8B (Q4_K_M) — ~5 GB RAM

According to LocalLLM.in, Qwen 3 8B is a strong alternative for reasoning tasks, especially in mathematics and multilingual scenarios. In Ollama, its thinking mode is enabled by default.

ollama pull qwen3:8b

ollama run qwen3:8b "Solve: if 3x + 7 = 22, what is x?"

What to choose?

- ✔️ Code debugging and logical tasks → DeepSeek R1 8B

- ✔️ Math and multilingual reasoning → Qwen 3 8B

- ✔️ If 8B doesn't fit — Phi-4 Mini as a compromise (smaller, but without chain-of-thought)

Conclusion: Reasoning on 8 GB is possible, but it's the limit

of comfort. 8B models require almost all available memory. For regular

work with such tasks, it's worth considering an upgrade to 16 GB —

the difference in capabilities will be significant.

🎯 CPU vs GPU vs Apple Silicon — where 8 GB means different 8 GB

8 GB on a Mac M1 and 8 GB on a Windows laptop with Intel are two different experiences.

Apple Silicon uses unified memory, where all memory is available to both CPU

and GPU simultaneously. On a regular PC, RAM and VRAM are separate pools,

and for AI models, this is critical.

Mac M1 with 8 GB is a full-fledged workstation for local AI.

Windows laptop with 8 GB and Intel HD Graphics is a struggle for every megabyte.

Apple Silicon (M1/M2/M3) — the best scenario for 8 GB

On Apple Silicon, all RAM is unified memory.

This means that the GPU part of the chip has access to the same 8 GB

as the CPU. Ollama automatically uses Metal for acceleration —

without additional settings.

Result: a 7B model in Q4_K_M on an M1 with 8 GB delivers roughly 10–15 tokens/sec, which is usable for interactive work, and 3–4B models are faster still. According to SitePoint, Phi-4 Mini on an M1 MacBook Air reaches approximately 15–20 tok/s, which is sufficient for daily work.

Windows / Linux with discrete GPU (RTX 3060, RTX 4060) — good scenario

If there is a discrete graphics card with 6–8 GB of VRAM, the model is loaded entirely into GPU memory, and system RAM remains free for the OS and software. According to LocalLLM.in, on an RTX 4060 (8 GB VRAM) a 7B model delivers 40+ tokens/sec, the fastest option of all.

Windows / Linux without GPU (Intel HD / AMD Radeon iGPU) — difficult scenario

Without a discrete GPU, the model runs entirely on the CPU. Ollama will still

start — but the speed drops to 3–6 tokens/sec.

According to LocalLLM.in, CPU-only inference is acceptable for batch tasks but frustrating for interactive use. On top of that, system RAM is shared between the OS, software, and the model, and on 8 GB this is very tight.

Summary table

| Platform | 7B model (Q4) | 3B model (Q4) | Speed | Comfort |
| --- | --- | --- | --- | --- |
| Mac M1/M2 8 GB | ✔️ Works | ✔️ Comfortable | 15–20 tok/s | ⭐⭐⭐⭐ |
| Windows + RTX 4060 8 GB VRAM | ✔️ Works fast | ✔️ Comfortable | 40+ tok/s | ⭐⭐⭐⭐⭐ |
| Windows/Linux CPU only 8 GB | ⚠️ At the limit | ✔️ Works | 3–6 tok/s | ⭐⭐ |

Conclusion: If you have a Mac M1+ with 8 GB — you are in the

best position for local AI on budget hardware. If Windows

without a GPU — focus on 3B models and close all unnecessary applications.

More details on installation on different OSs —

in the article How to install Ollama on Mac, Windows, and Linux.

🎯 Quantization in simple terms: Q4 vs Q8 and what to choose on weak hardware

Short answer:

Quantization is model compression that reduces its size by 2–4 times

with minimal quality loss. On 8 GB, the optimal choice is Q4_K_M:

the best balance between size, speed, and response quality.

Quantization is like JPEG for photos. The file is smaller,

the difference is almost imperceptible. But if you compress too much —

the quality will noticeably drop.

When you see tags like :7b-q4_0, :8b-instruct-q8_0, or :3b-q4_k_m in a model name on Ollama, they denote the quantization level. The number after "q" is the approximate number of bits per parameter.
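Bits per parameter translate directly into an approximate size: parameters times bits, divided by 8 to get bytes. The sketch below shows only this nominal arithmetic; `weights_size_gb` is an illustrative helper. Real model files come out somewhat larger, because K-quants keep part of the weights at higher precision and the file also carries metadata.

```python
def weights_size_gb(params_billions, bits_per_weight):
    """Approximate size of the weights: parameters x bits, converted to gigabytes."""
    total_bits = params_billions * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9  # bits -> bytes -> GB


# Nominal sizes for a 7B model at the bit widths named in the tags.
for bits in (8, 4, 2):
    print(f"7B at {bits}-bit: ~{weights_size_gb(7, bits):.2f} GB")
```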

Quantization levels: what the tags mean

- ✔️ Q8 (8-bit): maximum quality, largest size. For a 7B model — ~8 GB. Won't fit on 8 GB RAM.

- ✔️ Q4_K_M (4-bit, K-quant medium): optimal balance. For 7B — ~4–5 GB. Recommended for 8 GB systems.

- ✔️ Q4_K_S (4-bit, K-quant small): slightly smaller than Q4_K_M, slightly lower quality.

- ⚠️ Q2_K (2-bit): minimal size (~2.5 GB for 7B), but noticeable quality degradation. Extreme option.

The "K" suffix denotes the newer K-quant methods, which distribute precision more intelligently among the model's layers. At a comparable size, K-quant variants generally give better quality than the legacy options (q4_0, q4_1).

How much models of different quantizations weigh

| Model | Q8 | Q4_K_M | Q2_K |
| --- | --- | --- | --- |
| Phi-3 Mini (3.8B) | 4.0 GB | 2.3 GB | 1.2 GB |
| Llama 3.1 (8B) | ~8 GB | ~4.5 GB | ~2.6 GB |
| Mistral 7B | ~8 GB | ~4.1 GB | ~2.8 GB |

Data from LocalAIMaster.

Rule for 8 GB: always choose Q4_K_M. If it doesn't fit — reduce

the model size (3B instead of 7B), not the quantization level (Q2 instead of Q4).

A smaller model with Q4 will give better quality than a larger one with Q2.

More details on compression techniques and their impact on quality —

in the article Model Quantization: INT4, INT8 — what it is and how it affects quality.

Conclusion: Q4_K_M is the gold standard for 8 GB. Don't be tempted

to download Q8 "for quality" — the model won't fit in memory,

and you'll get swap to disk instead of fast responses.

🎯 Optimizing Ollama for maximum performance on weak hardware

Three environment variables and one habit (closing unnecessary applications) — that's all

you need to squeeze the maximum out of 8 GB. Setup takes

a minute, and the difference in stability is noticeable.

On powerful hardware, Ollama "just works."

On weak hardware — you need to help it not waste memory on what

you don't need.

By default, Ollama can keep several models in memory

simultaneously and process parallel requests. On 8 GB, this is an unnecessary luxury.

Here's a minimal set of optimizations:

Environment variables

# Keep only one model in memory (by default there can be more)

export OLLAMA_MAX_LOADED_MODELS=1

# One parallel request (no memory contention)

export OLLAMA_NUM_PARALLEL=1

# Reduce the context window (saves roughly 200–800 MB of RAM)

export OLLAMA_CONTEXT_LENGTH=2048

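On macOS / Linux, add these lines to ~/.zshrc or ~/.bashrc. On Windows, set them via the system environment variables or your PowerShell profile. The variables are read by the Ollama server process, so restart Ollama after changing them. If Ollama runs as a background service or desktop app rather than from your shell, the variables have to be set in that service's environment.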
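Why does a smaller context window save memory? The KV-cache grows linearly with the number of tokens in the window. Here is a rough sketch of the arithmetic, assuming the Llama 3.1 8B shape (32 layers, 8 KV heads, head size 128) and a 16-bit cache. Other models and cache settings will give different numbers, and `kv_cache_bytes` is an illustrative helper rather than anything Ollama exposes.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """KV-cache size: one key and one value vector per layer, per KV head, per token."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens


# Assumed architecture: Llama 3.1 8B (32 layers, 8 KV heads, head size 128), 16-bit cache.
for ctx in (2048, 4096, 8192):
    mib = kv_cache_bytes(32, 8, 128, ctx) / 2**20
    print(f"context {ctx}: {mib:.0f} MiB")
```

Under these assumptions the cache takes 256 MiB at 2048 tokens, 512 MiB at 4096, and 1024 MiB at 8192, so dropping from 8K to 2K frees roughly three quarters of a gigabyte.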

Before running the model

Sounds trivial, but on 8 GB it's critical:

- ✔️ Close your browser or leave a maximum of 2–3 tabs

- ✔️ Close Slack, Discord, Spotify — each program consumes 200–500 MB

- ✔️ Check current usage:

ollama ps will show loaded models

- ✔️ If an old model is still in memory —

ollama stop model_name

Modelfile for finer control

If you want more control — create a Modelfile with optimized parameters:

FROM phi3:mini

PARAMETER num_ctx 2048

PARAMETER num_thread 4

PARAMETER temperature 0.7

num_ctx 2048 — reduces the context window (less RAM for KV-cache).

num_thread 4 — limits the number of CPU threads so the system

remains responsive.
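If you keep several low-memory variants, the Modelfile text can be generated instead of written by hand. This is only a sketch: `render_modelfile` is a hypothetical helper, and it covers just the FROM and PARAMETER instructions used above.

```python
def render_modelfile(base_model, **parameters):
    """Return Modelfile text: one FROM line plus one PARAMETER line per setting."""
    lines = [f"FROM {base_model}"]
    lines += [f"PARAMETER {name} {value}" for name, value in parameters.items()]
    return "\n".join(lines) + "\n"


text = render_modelfile("phi3:mini", num_ctx=2048, num_thread=4, temperature=0.7)
print(text)

# To use it, save the text as "Modelfile" and build a named model, for example:
#   ollama create phi3-lowmem -f Modelfile   ("phi3-lowmem" is a made-up name)
#   ollama run phi3-lowmem
```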

Step-by-step guide for installation and first run —

in the article How to install Ollama on Mac, Windows, and Linux: a complete guide 2026.

And about creating custom models via Modelfile —

in the article Modelfile in Ollama: create your custom AI.

Conclusion: Three environment variables + closed unnecessary programs =

stable operation on 8 GB. Without these settings, even a light model

can cause swap to disk.

🎯 What NOT to try on 8 GB — my experience

Short answer:

13B+ models, any models in Q8 quantization, and attempts to run

two models simultaneously — guaranteed disappointment on 8 GB.

I tested this on my Mac M1 — so you don't have to.

Everyone who worked with Ollama on 8 GB went through the same

stage: "Maybe 13B will fit after all?" No, it won't.

I checked.

Working with Ollama on a Mac M1 with 8 GB of unified memory, I tested dozens of models of different sizes. Here's an honest list of what doesn't work, or works so poorly that it might as well not work at all.

❌ 13B models and larger

Llama 2 13B, Qwen 14B, CodeLlama 13B — even in Q4 quantization

they require 8–9 GB just for model weights. Add KV-cache, OS,

and you get a system that continuously swaps to disk.

I tried running Llama 2 13B Q4: it spent the first 5 minutes loading, then produced 1–2 tokens per second with constant pauses. That is unusable for interactive work.

❌ Any 7B model in Q8 quantization

A Q8 version of a 7B model weighs about 8 GB — that's all your RAM.

The OS doesn't magically disappear. I tried Mistral 7B Q8 —

the system froze a minute after startup. Always use Q4_K_M

for 7B models on 8 GB.

❌ Two models simultaneously

Ollama can keep multiple models in memory. On 16 GB, this is convenient —

you switch between models instantly. On 8 GB, it's a recipe for a swap storm.

Keep OLLAMA_MAX_LOADED_MODELS=1 and don't forget

ollama stop before loading another model.

❌ Large context windows (8K+ tokens)

Each doubling of the context window means hundreds of additional megabytes

for the KV-cache. On 8 GB, keep the context to 2048–4096 tokens maximum.

Passing a 10-page document to the model in one go won't work; you need to break it into parts.
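Breaking a document into parts can be as simple as splitting on words with a small overlap, so that sentences cut at a boundary still appear whole in one chunk. This is a minimal sketch. The default of 1000 words is an assumption based on the rough rule of thumb of about 0.75 English words per token, which leaves room in a 2048-token window for the instruction and the answer. `split_into_chunks` is an illustrative helper.

```python
def split_into_chunks(text, max_words=1000, overlap=50):
    """Split text into word-based chunks that overlap slightly to keep context."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be non-negative and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


# Tiny demonstration with artificial "words" w0..w24.
demo = " ".join(f"w{i}" for i in range(25))
for part in split_into_chunks(demo, max_words=10, overlap=2):
    print(part)
```

Each chunk can then be summarized separately, and the partial summaries combined in a final request.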

❌ Mixtral 8x7B (MoE-architecture)

Mixtral activates only 2 out of 8 "experts" for each token,

so theoretically it uses less computation. But all 8 experts

must be in memory — and that's 26+ GB even in Q4.

The name "8x7B" is misleading: it's not a 7B-sized model.
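The gap between what Mixtral computes with and what it must hold in memory is easy to put into numbers. The sketch below uses Mixtral 8x7B's published parameter counts (about 46.7B in total, about 12.9B active per token) and assumes an average of roughly 4.5 bits per weight for a Q4 quantization. That average is an assumption, not a measured value.

```python
def q4_weights_gb(params_billions, bits_per_weight=4.5):
    """Approximate weight size in GB; ~4.5 bits/weight is an assumed Q4 average."""
    return params_billions * bits_per_weight / 8


TOTAL_PARAMS_B = 46.7   # all 8 experts plus shared layers must stay in memory
ACTIVE_PARAMS_B = 12.9  # parameters actually used to compute each token

print(f"resident in memory: ~{q4_weights_gb(TOTAL_PARAMS_B):.0f} GB")
print(f"used per token:     ~{q4_weights_gb(ACTIVE_PARAMS_B):.0f} GB")
```

The compute cost resembles a mid-sized model, but the memory bill is for the full 46.7B parameters.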

General rule: if ollama run

takes longer than 30 seconds to load and the first response comes after

a minute — the model is too large for your system. Don't wait for it to

"warm up" — close it and choose a smaller model.

Comparison of models by size, quality, and tasks —

in the article Top 10 Ollama models in 2026: which to choose.

Conclusion: I went through this myself — I thought a larger

model would give better results, downloaded 13B, waited a minute for the first

response, and deleted it. Installed 3B — and performance immediately increased.

On 8 GB, the better strategy is to choose a model that works fast and stably,

rather than struggling with one that "almost fits."

🎯 Tests: what to expect in practice

Short answer:

On a Mac M1 8 GB, a 3B model delivers 20–30 tokens/sec, a 7B model — 10–15 tok/s.

On CPU-only Windows — two to three times slower.

Below is a summary table for orientation.

Benchmarks on the internet are often done on a clean system without

other software. In reality — with VS Code open and 5 Chrome tabs —

the numbers will be lower. Therefore, these tests are closer to reality.

Summary performance table

| Model | RAM | Mac M1 8 GB | CPU-only 8 GB | RTX 4060 8 GB VRAM |
| --- | --- | --- | --- | --- |
| Gemma 2B (Q4) | ~1.6 GB | ~30 tok/s | ~10 tok/s | ~50+ tok/s |
| Phi-3 Mini 3.8B (Q4) | ~2.3 GB | ~25 tok/s | ~8 tok/s | ~45 tok/s |
| Llama 3.2 3B (Q4) | ~2 GB | ~28 tok/s | ~9 tok/s | ~48 tok/s |
| Qwen 2.5 Coder 3B (Q4) | ~2.2 GB | ~25 tok/s | ~8 tok/s | ~45 tok/s |
| Llama 3.1 8B (Q4) | ~4.5 GB | ~12 tok/s | ~4 tok/s | ~40 tok/s |
| DeepSeek R1 8B (Q4) | ~5 GB | ~10 tok/s | ~3 tok/s | ~35 tok/s |

Data is approximate, based on results from LocalLLM.in, SitePoint, and LocalAIMaster. Actual speed depends on system load, context window size, and background processes.

What do these numbers mean in practice?

- ✔️ 15+ tok/s: comfortable interactive chat — the response appears faster than you can read it

- ✔️ 8–15 tok/s: workable, but a delay is felt on long responses

- ⚠️ 3–6 tok/s: acceptable for one-off tasks (debugging, analysis), frustrating for active chat

- ❌ <3 tok/s: model is too large for this system
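You can measure your own numbers instead of relying on published ones. Ollama's API responses include generation statistics, with `eval_count` (tokens generated) and `eval_duration` (in nanoseconds) among them. The sketch below turns those into tokens per second and maps the result onto the bands above. The helper names are illustrative, the sample metadata is made up, and folding the unlisted 6–8 tok/s range into the slow band is an assumption.

```python
def tokens_per_second(response):
    """Generation speed from Ollama response metadata (durations are nanoseconds)."""
    return response["eval_count"] / response["eval_duration"] * 1e9


def rate(speed):
    """Map a speed onto the bands above; 6-8 tok/s is folded into the slow band."""
    if speed >= 15:
        return "comfortable"
    if speed >= 8:
        return "workable"
    if speed >= 3:
        return "one-off tasks only"
    return "model too large"


# Made-up metadata for illustration: 240 tokens generated in 20 seconds.
fake_response = {"eval_count": 240, "eval_duration": 20_000_000_000}
speed = tokens_per_second(fake_response)
print(f"{speed:.1f} tok/s -> {rate(speed)}")
```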

Conclusion: For daily work on 8 GB, aim

for 3B models — they deliver 20+ tok/s on Apple Silicon and keep

the system responsive. 7–8B models — for specific tasks, when you're

willing to close everything unnecessary and wait.

❓ Frequently Asked Questions (FAQ)

Can Ollama be run on a laptop with 8 GB RAM?

Yes. Models with 1–3B parameters (Phi-3 Mini, Llama 3.2 3B, Gemma 2B)

work comfortably on any system with 8 GB. Models with 7–8B

work at the limit — you need to close unnecessary programs.

More details — in the

Ollama installation guide.

What is the best model for 8 GB RAM?

Depends on the task. For code — Qwen 2.5 Coder 3B.

For text and chat — Llama 3.2 3B or Phi-3 Mini.

For reasoning and debugging — DeepSeek R1 8B (at the limit of 8 GB).

Full model comparison —

in the article Top 10 Ollama models in 2026.

Is a GPU needed for Ollama?

No, Ollama works on CPU too. But with a GPU (discrete or Apple Silicon)

speed is 3–10 times higher. On a CPU-only system with 8 GB,

stick to 3B models and smaller for comfortable work.

What's better: a 7B model in Q2 or a 3B in Q4?

Almost always — 3B in Q4. Aggressive quantization (Q2) significantly

reduces the quality of responses, especially on complex tasks.

A smaller model with normal quantization will give better results.

Can Ollama on 8 GB replace ChatGPT?

For daily tasks — summarization, simple questions, code generation —

yes. For complex analysis, multimodal tasks, and working with large

context — cloud models are still stronger.

The optimal approach is hybrid:

Ollama for regular tasks, ChatGPT/Claude for complex ones.

More details —

in the article Ollama vs ChatGPT vs Claude: when local AI is better.

How much disk space is needed?

One 3B model in Q4 takes approximately 2 GB on disk, and three models for different tasks take 6–8 GB. Ollama stores models in ~/.ollama, and downloaded models can be deleted with the command ollama rm model_name.

Is it worth upgrading to 16 GB?

If you plan to regularly work with local AI — definitely yes.

16 GB opens access to 13–14B models, full-quality 7B in Q8,

and comfortable work with large context windows.

The step from 8 to 16 GB is the upgrade that expands what you can run the most.

✅ Conclusions

8 GB of RAM is not a death sentence for local AI,

but it's a limit that requires conscious decisions. Here's the main takeaway:

- ✔️ 3B models — comfort zone: Phi-3 Mini, Llama 3.2 3B, Gemma 2B work fast and stably, leaving room for IDE and browser

- ✔️ 7–8B models — work zone: DeepSeek R1 8B, Qwen 3 8B work at the limit, but provide significantly better quality for specific tasks

- ✔️ Q4_K_M — the only sensible quantization choice on 8 GB: a smaller model with Q4 is almost always better than a larger one with Q2

- ✔️ Apple Silicon with 8 GB — the best budget option: unified memory provides an advantage over CPU-only systems

- ✔️ 13B+ models, Q8, two models simultaneously — not worth it: tested, doesn't work

I myself use exactly this approach: I keep several models

for different tasks — one for code, another for text, a separate one for debugging.

Each model has its strong side, and instead of one large one

that might not fit in memory, it's better to have 2–3 specialized light ones.

Switching between them via ollama run — takes seconds.

If you're just starting —

install Ollama using

our guide,

download phi3:mini and try it.

In five minutes, you'll have a working local AI —

without subscriptions, without internet, without transmitting data externally.

And if you need a website, blog, or web application with AI functionality integration

— write to us at WebsCraft,

we'll help you implement it.

📖 Sources