OpenAI released GPT-5.5 just six weeks after GPT-5.4 — and it's not another patch.

Spoiler: the first fully retrained base model since GPT-4.5 offers a real leap in agentic tasks and long context, but hasn't improved on hallucinations — and costs 20% more, not double as it might seem at first glance.

⚡ In Short

- ✅ Terminal-Bench 2.0: 82.7% (+7.6pp): GPT-5.5 leads among public models in agentic coding

- ✅ Long Context: MRCR v2 74% vs 36.6%: biggest leap — quality on 1M tokens has genuinely doubled

- ✅ Effective Surcharge ~20%, not 100%: the model uses ~40% fewer tokens per task

- ⚠️ Hallucinations haven't improved: BullshitBench — 45% pushback, same as GPT-5.4

- 🎯 You will get: a concrete checklist — migrate now or not, with real numbers for each scenario

- 👇 Below are detailed explanations, benchmarks, tables, and a decision checklist

📚 Article Content

What is GPT-5.5 and why was it released

GPT-5.5 (internal codename — "Spud") was released on April 23, 2026 — six weeks after GPT-5.4.

This is OpenAI's first fully retrained base model since GPT-4.5. All releases in between were

primarily tuning and iterations on the existing architecture. GPT-5.5 is not GPT-5.4 with patches.

Positioning within the OpenAI lineup as of April 2026:

| Model |

Purpose |

API Price (input / output per 1M tokens) |

| GPT-5.5 |

Flagship for agentic and complex tasks |

$5 / $30 |

| GPT-5.5 Pro |

Research-grade, maximum accuracy |

$30 / $180 |

| GPT-5.4 |

High-volume, latency-sensitive endpoints |

$2.5 / $15 |

Why release after six weeks? Competitive pressure: Anthropic announced Claude Mythos Preview,

Google is pushing with Gemini 3.1 Pro. But the difference between 5.4 and 5.5 is more significant than between previous updates —

and independent benchmarks confirm this.

Key differences between GPT-5.5 and GPT-5.4

GPT-5.5 tops the Artificial Analysis Intelligence Index

with a score of 60 points — three points ahead of Claude Opus 4.7 and Gemini 3.1 Pro (both at 57).

GPT-5.4 is at the same level of 57. This means GPT-5.5 is the first model to truly break away from the leading group,

not just shuffle within statistical error.

However, the aggregate index is an average. It's more important to understand *where* the difference lies,

and where it's almost non-existent. Let's break it down by area.

Agentic Coding and Terminal Operations

Terminal-Bench 2.0 is the most telling benchmark for developers. It tests not code generation,

but the model's ability to *navigate* a CLI environment: make multi-step decisions,

coordinate tools, handle unexpected errors. GPT-5.5 shows 82.7% compared to

75.1% for GPT-5.4 — a gain of +7.6pp. This is the highest result among publicly available models:

for comparison, Claude Opus 4.7 is at 69.4%, Gemini 3.1 Pro is lower.

What this means in practice: an agent using GPT-5.5 gets "stuck" less often in the middle of a pipeline and more often

completes the task to a real result without further human intervention.

Tool Orchestration

MCP Atlas is a benchmark from Scale AI for multi-step tool orchestration.

GPT-5.5: 75.3%, GPT-5.4: 67.2% (+8.1pp). This is the second largest gain in the table.

Here, context is important: Claude Opus 4.7 scores 79.1% on this same benchmark — meaning Anthropic is still ahead in tool orchestration. GPT-5.5 has caught up, but not surpassed.

Long Context — The Biggest Leap

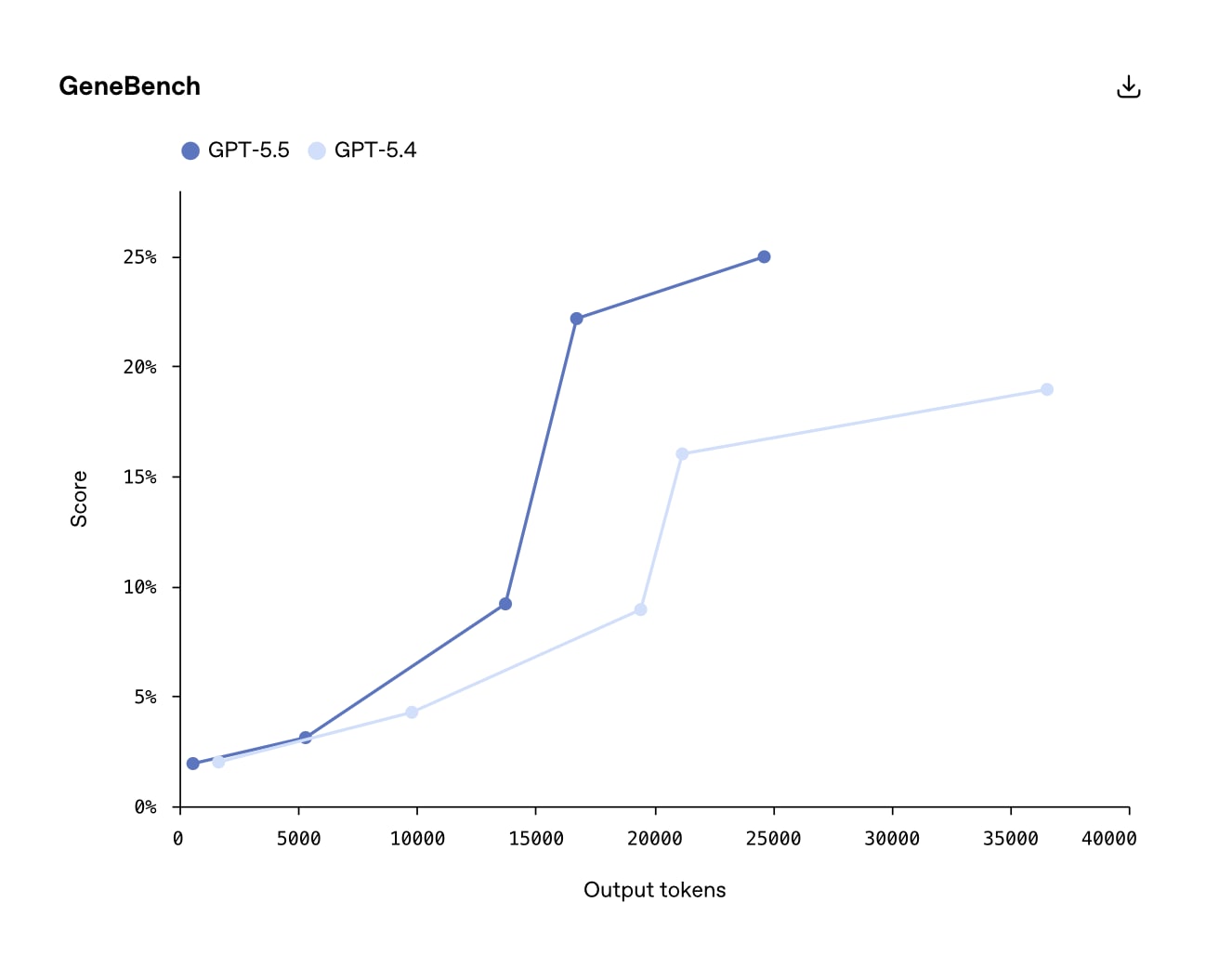

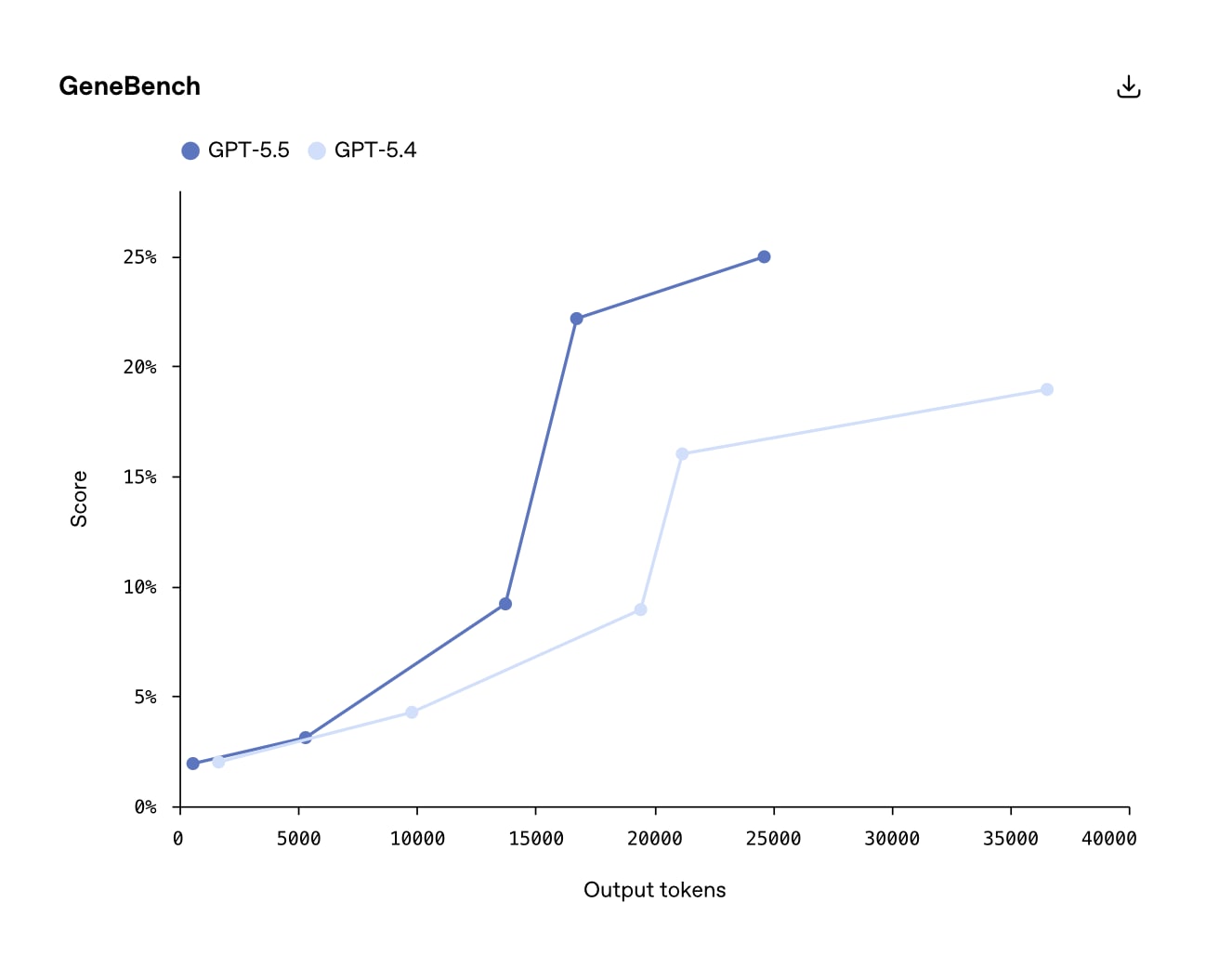

MRCR v2 @ 1M tokens is a benchmark for retrieval and reasoning in very long contexts.

GPT-5.4: 36.6%. GPT-5.5: 74.0%. The gain is +37.4pp, the result more than doubled.

This is fundamentally important. The "1M token window" in GPT-5.4 existed on paper — but the quality of work

with truly long context was weak. GPT-5.5 is the first OpenAI model where a million tokens of context is a truly

*working* capability, not a marketing number.

For developers who submit large codebases or dozens of documents in a single request,

this is the most significant practical change in the release.

Areas with Minimal Gain

SWE-Bench Pro is a benchmark for solving real GitHub issues. GPT-5.4: ~57.7%, GPT-5.5: 58.6% (+0.9pp).

Almost nothing. However, SWE-Bench Pro is the benchmark where all frontier models cluster within

1–2 percentage points of each other. A more significant indicator here is the number of tokens per task,

and GPT-5.5 is consistently more efficient in this regard.

For comparison: Claude Opus 4.7 on SWE-Bench Pro is 64.3%, meaning for tasks like

"fix a specific bug in a real repository," Anthropic is still ahead.

Pure Academic Knowledge Without Tools

HLE (Humanity's Last Exam) without tools — a test of knowledge depth in complex interdisciplinary questions.

GPT-5.5: 41.4%. GPT-5.5 Pro: 43.1%. Claude Opus 4.7: 46.9%. Mythos Preview: 56.8%.

The conclusion is unambiguous: in *pure* academic knowledge without tool access, OpenAI is not leading yet.

If your scenario involves answering complex scientific or interdisciplinary questions without search,

Claude Opus 4.7 or Mythos (for those with access) show better results.

| Benchmark |

What it measures |

GPT-5.4 |

GPT-5.5 |

Δ |

Leader among all models |

| Terminal-Bench 2.0 |

Agentic coding in CLI |

75.1% |

82.7% |

+7.6pp |

GPT-5.5 ✅ |

| MCP Atlas (tool use) |

Tool orchestration |

67.2% |

75.3% |

+8.1pp |

Claude Opus 4.7 (79.1%) |

| MRCR v2 @ 1M tokens |

Long context |

36.6% |

74.0% |

+37.4pp |

GPT-5.5 ✅ |

| ARC-AGI-2 |

Abstract reasoning |

— |

— |

+11.7pp |

GPT-5.5 ✅ |

| SWE-Bench Pro |

Real GitHub issues |

~57.7% |

58.6% |

+0.9pp |

Claude Opus 4.7 (64.3%) |

| HLE without tools |

Academic knowledge |

— |

41.4% |

— |

Mythos Preview (56.8%) |

Latency

Despite significantly greater capabilities, GPT-5.5 matches GPT-5.4 in per-token latency under real-world conditions.

Typically, more powerful models are slower. This did not happen here.

Technically, this was achieved through deep hardware and software integration: the model is served on

NVIDIA GB200 and GB300 NVL72, with custom heuristic algorithms for load balancing across

GPU cores — resulting in a +20% increase in token generation speed. Notably, these algorithms themselves

were partially written with the assistance of GPT-5.5.

Practical consequence: fewer tokens per task + preserved latency = less end-to-end time

for most agentic scenarios compared to GPT-5.4.

Code Quality: has it improved in practice

The short answer: yes, but not everywhere and not equally. GPT-5.5 is not a "better version of GPT-5.4

for any code." It's a model that made a leap in a specific type of task —

and remained at roughly the same level in others. Let's break it down honestly for each area.

Generating New Code

If you expect a dramatic improvement in generating new components from scratch — you will likely

be surprised by the minimal change. On SWE-Bench Pro (real GitHub issues where a correct patch needs to be written),

GPT-5.5 shows 58.6% compared to 57.7% for GPT-5.4. The difference is less than one percentage point.

For context: Claude Opus 4.7 on this benchmark is 64.3%. If your primary scenario is

generating new functions or services based on specifications, and you are already using Claude,

there's no point in switching for generation quality alone.

Refactoring Large Codebases

Here, the difference is real. According to the GitHub Copilot team

(GPT-5.5 appeared there on April 24), the model is strongest in complex multi-step agentic

coding tasks. The key concept is persistence through context.

GPT-5.4 often "forgot" the initial goal after a few steps during large refactorings —

especially if the change affected multiple files. GPT-5.5 better maintains the overall intent and

"pushes" changes through the entire codebase sequentially. This is not due to magic, but a specific

number: MRCR v2 @ 1M tokens — 74% vs 36.6%. The model simply reads what has already been done better

and doesn't lose the thread.

In practice, this looks like:

-

Renaming with cascading changes: GPT-5.5 tracks all usages

and doesn't miss imports or dependencies in adjacent modules.

-

Changing method signatures: the model automatically finds all call sites and adapts them,

rather than stopping after the first file.

-

Migrating between library versions: it keeps the old and new API in mind simultaneously

throughout a multi-step process.

Debugging

One of the most noticeable practical improvements is its behavior with ambiguous failures.

In situations where the cause of an error is not obvious, GPT-5.4 often entered a *retry loop*:

it tried one thing, it didn't work, it tried something similar, it didn't work again — and so on,

consuming tokens and time without results.

GPT-5.5 recognizes earlier that the current approach isn't working and either changes strategy

or explicitly stops and explains why the task cannot be solved with the available information.

This reduces the number of tokens spent on failed attempts — and, consequently, the real cost of debugging sessions.

Testing and Validation

GPT-5.5 can independently run a test after generating code, analyze the result,

and continue working — without user input. If a test fails, the model doesn't return

the code "as is" with an explanation like "the problem is likely here" — it goes further and fixes it.

This is particularly noticeable in Codex, where OpenAI specifically tuned the model for this scenario.

Early access teams have reported savings of up to 10 hours per week

on code review and document perusal — precisely because the model doesn't stop

after the first draft.

Working with Large Files

If you feed a large monolithic file, several related modules, or

dozens of documents into the context simultaneously — GPT-5.5 is genuinely different. MRCR v2 @ 1M tokens shows

an increase from 36.6% to 74.0% — more than double.

Specific example: a Spring Boot service with multiple layers (controller, service, repository,

DTO, config) provided in a single context. GPT-5.4 often "lost" details of the lower layers

when working with the upper ones. GPT-5.5 maintains the entire stack simultaneously and can make consistent

changes without losing context between components.

Limitations: Codex has a maximum of 400K tokens (not 1M). 1M is only available via API.

For most real-world codebases, 400K is sufficient, but if you have a large monolith or

need to submit multiple repositories — consider this limitation.

Errors and Hallucinations — The Honest Picture

Here, GPT-5.5 is not the winner, and it's important not to gloss over this.

According to BullshitBench —

a benchmark that assesses the model's ability to reject nonsensical or irrelevant queries —

GPT-5.5 shows a ~45% pushback rate. Approximately the same as GPT-5.4.

The improvement relative to its predecessor is zero.

GPT-5.5 Pro is worse: ~35% pushback rate. More thinking compute doesn't fix the problem —

on the contrary, the model spends extra time "rationalizing" nonsense instead of rejecting it.

Peter Gostev (AI Capability Lead, Arena.ai) comments: "It seems that at a certain size level,

improvements come from mid/post-training, not more compute."

| Model |

BullshitBench pushback rate |

Assessment |

| Claude (Anthropic models) |

Highest among leaders |

✅ Leader |

| GPT-5.5 |

~45% |

⚠️ No change vs 5.4 |

| GPT-5.4 |

~45% |

⚠️ Baseline |

| GPT-5.5 Pro |

~35% |

❌ Worse than baseline |

Practical conclusion: if hallucination rate is critical in your product —

for example, a RAG assistant for customers, legal, or medical content — GPT-5.5 does not solve

this problem. For such scenarios, Claude Opus 4.7 remains a more reliable choice.

If, however, you are building an agentic pipeline where each step is programmatically verified — the model's hallucination rate

is less critical, and here the advantages of GPT-5.5 outweigh the drawbacks.

Working with Agents and Tools

Agentic work is the main bet of GPT-5.5. OpenAI directly positions the model as a step

towards a "new way of interacting with computers": not prompt → response, but task → autonomous execution.

If you want to understand the mechanics of how models work with tools —

read our article on tool use vs function calling, JSON Schema, and the connection with RAG.

Let's look at what's behind the new release in terms of specific capabilities.

Function calling

Formally, the function calling API in GPT-5.5 has not changed — the same schemas, the same syntax.

The real difference is in the quality of calling decisions.

In complex scenarios, GPT-5.4 often called a tool based on the first superficial match:

there's a `search()` function — I'll call it, even if the task can be solved locally without a query.

GPT-5.5 better assesses whether a tool is needed at all, and if so — which one and with what parameters.

This is confirmed on Tau2-bench telecom — a benchmark for multi-step execution through tools

in a realistic environment (telecommunication scenarios with complex logic).

GPT-5.5 shows a noticeable improvement over 5.4. For comparison: on MCP Atlas

(a broader benchmark for tool orchestration), Claude Opus 4.7 is still ahead — 79.1% versus 75.3%.

This means GPT-5.5 has caught up but not surpassed in this category.

Where this is noticeable in practice:

-

Fewer unnecessary calls: the agent doesn't "ping" an external API every time the response

is in the context — this directly reduces latency and pipeline cost.

-

Correct sequence: if a task requires getting data first,

then transforming it, then saving it — the model independently builds this order

without explicit instructions for each step.

-

Tool error handling: if a call returns an error or an empty result,

GPT-5.5 adapts its strategy instead of just repeating the same call.

Multi-step execution

The main difference from GPT-5.4 is its behavior in ambiguous conditions.

GPT-5.4 effectively required explicit guidance at each step: if the task

was not sufficiently specified — it stopped and asked. If a failure occurred — it stopped or

went into a retry loop. This turned the "agentic" pipeline into a semi-manual process.

GPT-5.5 behaves differently: navigates through ambiguity — determines the most

likely intent and continues, rather than getting blocked. Key characteristics:

-

Plan → execute → verify → continue: the model independently builds an execution plan,

runs steps, verifies the intermediate result, and adjusts course — without user prompts

after each step.

-

Early detection of dead ends: if the current approach doesn't work,

GPT-5.5 recognizes this earlier and either changes strategy or explicitly stops

with an explanation — instead of an infinite retry loop that wastes tokens and time.

-

Maintaining the goal through many steps: in pipelines with 10+ steps,

the model doesn't "forget" the initial task until the end of execution.

Early access teams (Nvidia, OpenAI partners) reported savings of up to 10 hours

per week — mainly on tasks involving reviewing large volumes of documents and

multi-step code review, where GPT-5.4 required manual "pushing" between steps.

Important nuance: persistence is not the same as accuracy. A model that "doesn't stop"

can confidently execute 10 steps in the wrong direction. For production agents,

programmatic verification of intermediate results remains mandatory — GPT-5.5 does not eliminate

this necessity but reduces the number of places where manual intervention is needed.

Orchestration

It's important to distinguish between two types of orchestration here: computer use (working with UI, browsers, applications)

and CLI/API orchestration (terminal, API calls, automation without UI).

GPT-5.5 shows different results in each.

Computer use (OSWorld-Verified): 78.7% versus 78.0% for GPT-5.4 — a difference of 0.7pp,

essentially statistical equality. If you are building an agent that controls a browser or

desktop applications, GPT-5.5 does not provide a significant improvement over its predecessor.

CLI and API orchestration (Terminal-Bench 2.0): 82.7% versus 75.1% — +7.6pp.

This is a fundamentally different picture. Terminal-Bench tests exactly what is important for backend developers:

command-line navigation, multi-step decision-making, coordination between

different CLI tools. For those building agents that automate deployment,

testing, migrations, or Git operations — this is the most relevant benchmark.

| Type of Orchestration |

Benchmark |

GPT-5.4 |

GPT-5.5 |

Δ |

Conclusion |

| Computer use (UI) |

OSWorld-Verified |

78.0% |

78.7% |

+0.7pp |

⚠️ No significant difference |

| CLI / API agents |

Terminal-Bench 2.0 |

75.1% |

82.7% |

+7.6pp |

✅ Real gain |

| Tool orchestration |

MCP Atlas |

67.2% |

75.3% |

+8.1pp |

✅ Gain, but Claude Opus 4.7 is ahead (79.1%) |

Overall conclusion for the section: if you are building an agent for the backend — CI/CD automation,

API operations, coding agent in the terminal — GPT-5.5 provides a real advantage.

If the agent works with a UI or browser — the difference is minimal.

If tool orchestration is a central part of the product — it's worth testing

Claude Opus 4.7 as well, which is still ahead on MCP Atlas.

Performance and Cost

The price of GPT-5.5 is one of the main questions that arises immediately after the announcement.

On paper, it has doubled. In practice — it hasn't. But OpenAI's claim of a "20% actual price increase"

also requires a critical look: the figure is self-reported and not independently confirmed.

Let's break it down.

Sticker Price vs. Real Task Cost

Official API pricing for GPT-5.5: $5 / $30 per 1M input/output tokens.

For comparison, GPT-5.4 is $2.5 / $15. So the per-token price has doubled.

But the per-token price is not the same as the cost of a task. OpenAI claims that GPT-5.5

uses approximately 40% fewer output tokens to complete the same Codex tasks.

This is confirmed by independent analysis from Artificial Analysis, but without access to the scaffold benchmark.

Math for a specific case:

| Model |

Output tokens per task |

Price per 1K output |

Task cost |

Difference |

| GPT-5.4 |

100K |

$0.015 |

$1.50 |

— |

| GPT-5.5 |

60K (−40%) |

$0.030 |

$1.80 |

+$0.30 (+20%) |

The difference is +$0.30 per task, not +$1.50. But there's an important nuance: this calculation

doesn't account for failed tasks. GPT-5.5 exits retry loops earlier on failed attempts —

meaning fewer tokens are spent on tasks that didn't complete successfully.

For teams with a large volume of agent tasks, this could be a significant additional saving that is difficult to measure without real A/B testing on their own data.

Scale: What the Bill Looks Like at High Volumes

| Output tokens/month |

GPT-5.4 (Standard) |

GPT-5.5 (–40% tokens) |

Actual Difference |

| 10M task tokens |

$150 |

$180 (6M × $0.030) |

+$30/mo |

| 100M task tokens |

$1,500 |

$1,800 (60M × $0.030) |

+$300/mo |

| 1B task tokens |

$15,000 |

$18,000 (600M × $0.030) |

+$3,000/mo |

At high volumes, the difference becomes noticeable in absolute terms, even if the percentage is the same.

Before making a decision, it's important to measure the actual number of tokens for a typical task

in your specific pipeline — it can differ significantly from the Codex averages.

Batch and Flex: How to Get Back to GPT-5.4 Pricing

OpenAI has maintained reduced rates for asynchronous workloads:

| Tier |

Input (1M tokens) |

Output (1M tokens) |

Suitable for |

| GPT-5.5 Standard |

$5.00 |

$30.00 |

Real-time API, agents |

| GPT-5.5 Batch / Flex |

$2.50 |

$15.00 |

Offline tasks, eval, backfill |

| GPT-5.5 Priority |

$12.50 |

$75.00 |

Critical real-time tasks |

| GPT-5.4 Standard |

$2.50 |

$15.00 |

Baseline for comparison |

Key takeaway for Batch: GPT-5.5 Batch costs the same as GPT-5.4 Standard.

If you have offline workloads — eval grading, batch summarization, content backfill,

mass classification — you get GPT-5.5 capabilities at GPT-5.4 pricing.

This is the most obvious case for migration.

GPT-5.5 Pro: When the $30/$180 Price is Justified

GPT-5.5 Pro uses the same base model with more parallel test-time compute.

The price is $30 input / $180 output per 1M tokens, which is 6 times more expensive than the base GPT-5.5.

According to Artificial Analysis, at medium compute, GPT-5.5 base achieves results

comparable to Claude Opus 4.7 at maximum effort — and costs ~$1,200 versus ~$4,800 per 1M tokens

for Claude at maximum settings. GPT-5.5 Pro is targeted for:

- Research-grade tasks where quality is more important than price

- Single complex queries (legal analysis, medical diagnostics, scientific calculations)

- Scenarios where the cost of a single error exceeds the cost of the model

Caveat: on BullshitBench, GPT-5.5 Pro shows a ~35% pushback rate — worse than base GPT-5.5 (~45%).

More compute doesn't mean fewer hallucinations. For tasks where response reliability is critical,

the Pro version offers no advantage over the base.

Response Speed

Per-token latency of GPT-5.5 in real-world conditions is identical to GPT-5.4 — despite the significantly higher model complexity.

OpenAI achieved this through hardware-software co-design on NVIDIA GB200/GB300 NVL72.

However, for agent pipelines, the more important metric is time-to-completion of a task, not per-token latency.

Here, GPT-5.5 wins doubly: fewer tokens per task + fewer retry iterations on failures =

shorter time from start to result, even with equal token generation speed.

Main Caution

The "40% fewer tokens" figure is OpenAI's self-report, measured on internal Codex tasks.

The Scaffold benchmark has not been published. Artificial Analysis independently confirmed the trend,

but the specific number depends on the type of tasks.

The rule is simple: perform A/B testing on your real prompts before any migration decision.

Compare not the per-token price, but the number of tokens and the number of successful completions

on the same set of tasks. Your billing dashboard will tell you more than any benchmark.

Where GPT-5.4 is Still Sufficient

Honestly, the first thing I do after any loud OpenAI announcement is look for where the new model

is *not* needed. Marketing always talks about what's gotten better.

It stays silent about where nothing has changed. And for most real products,

that's the most important question.

I use GPT models for summarization and classification at WebsCraft —

and immediately after the GPT-5.5 release, I had no desire to migrate this pipeline.

Here's why.

Summarization and Classification

For tasks like "shorten text," "determine topic," "extract structured fields" —

GPT-5.4 handles them excellently. GPT-5.5 in this scenario offers no noticeable difference

in quality but costs more per token. Yes, considering token efficiency, the real surcharge

is ~20% — but why pay even 20% more for an identical result?

All of GPT-5.5's upside is persistence in complex multi-step tasks and long context.

Summarization is neither of these. It's a single instruction, short input, predictable output.

There's nothing to "persist" here.

Basic RAG on a Fixed Corpus

If you have RAG with pre-prepared chunks, a fixed corpus, and simple

retrieve → generate without complex reasoning — GPT-5.4 and GPT-5.5 will yield practically the same result.

The quality of the response in basic RAG is primarily determined by the quality of retrieval and chunking,

not by which model is at the end of the pipeline.

It's a different story with multi-hop RAG, where the model has to independently decide what and how to search,

make multiple queries, and reconcile conflicting sources. That's where GPT-5.5 can make a difference.

But that's no longer "basic RAG."

Content Generation

I write articles for WebsCraft myself — and I don't intend to delegate this entirely to a model,

regardless of version. But even when talking about assistive scenarios: generating

a draft, expanding on theses, rephrasing — GPT-5.4 handles them just as well.

SWE-Bench Pro shows a +0.9pp difference between 5.4 and 5.5. For text content, the difference is likely

even smaller and subjective.

Separately: I don't believe OpenAI's claims that GPT-5.5 is "significantly more natural and less

sycophantic" compared to its predecessor. This has been said about every new model since GPT-4o.

Test it yourself with your own prompts.

High-Volume Batches with Fixed Structure

If the model always performs one simple action — for example, always returns JSON

with three fields based on the input text — then the capability overhead of GPT-5.5

is simply not utilized. You are paying for the potential of autonomous multi-step reasoning,

which your pipeline does not use.

For such tasks, the formula is simple: GPT-5.4 is cheaper → quality is identical → no reason to migrate.

And if you really want GPT-5.5 — use the Batch tariff ($2.50/$15),

which puts it at the standard price of GPT-5.4.

Latency-Sensitive Real-Time Endpoints

Per-token latency in GPT-5.5 is identical to GPT-5.4 — OpenAI confirms this, and it's technically supported

(NVIDIA GB200/GB300, custom balancing algorithms). But if you have an endpoint where time-to-first-token is critical and you've already optimized the prompt for minimal output —

there will be no real gain from 5.5. But the risk of unexpectedly changed model behavior

after migration is always present.

My approach: don't change what works without a measurable reason.

"A new model has been released" is not a measurable reason.

When a switch to GPT-5.5 is justified

In the previous section, I discussed scenarios where GPT-5.4 remains sufficient.

However, there are situations where the difference between 5.4 and 5.5 is not just marketing hype but a real, measurable improvement.

Skepticism towards OpenAI's announcements doesn't mean denying facts. If benchmarks show

a +37pp increase in long context performance, it cannot be ignored. Let's break down where the transition is justified

and why.

Complex Agentic Pipelines (5+ steps)

This is the primary use case for GPT-5.5 — and the only one where I would switch without much hesitation.

If your agent performs more than five steps autonomously, encounters failures mid-execution, and needs to independently decide how to proceed, GPT-5.4 systematically falls short here.

The issue with GPT-5.4 in such pipelines isn't that it's "dumber." The problem lies in its behavior under uncertainty: it would stop, ask for clarification, or enter a retry loop. GPT-5.5 navigates ambiguity by making assumptions about intent, continuing execution, and verifying results. Terminal-Bench 2.0 shows:

82.7% versus 75.1%. This is a +7.6pp improvement on tasks where this specific behavior is measured.

Practically speaking: if you have a CI/CD agent that autonomously runs tests, analyzes error logs, makes corrections, and retries, GPT-5.5 will successfully complete more of these cycles without manual intervention. The same applies to a code review agent that examines multiple files, tracks dependencies, and generates a consolidated report.

An important caveat, which I've already mentioned: persistence does not equal accuracy.

An agent that "doesn't stop" might confidently execute 10 steps in the wrong direction.

Programmatic verification of intermediate results is essential, regardless of the model.

GPT-5.5 reduces the number of points requiring manual intervention but does not eliminate

the need to check the output.

Large Codebases (>200K tokens of context)

MRCR v2 @ 1M tokens shows 74.0% versus 36.6%. The result more than doubled.

This is the most significant quantitative leap across all GPT-5.5 benchmarks and directly

affects developers working with large codebases.

Consider a specific scenario: you input several related modules into the context simultaneously —

a controller, service, repository, config, and tests. At volumes exceeding 100–150K tokens, GPT-5.4 begins to "lose" details from lower layers when working with upper ones. GPT-5.5 maintains the entire stack and makes consistent changes without losing context.

Where this might not work: Codex has a limit of 400K tokens, not 1M. Through the API, it's 1M.

If your monolith exceeds 400K and you want to input it entirely, you'll need direct API access, not Codex. For most real-world codebases, 400K is sufficient, but this is something to verify in advance, not after migration begins.

CLI Agents and Terminal Automation

Here, I am deliberately separating two scenarios that OpenAI's marketing has conflated into one "computer use."

Terminal and API Automation (CLI Agents): Terminal-Bench 2.0 shows a +7.6pp improvement.

This is a real gain. If you are building an agent for deployment, Git operations, working with bash scripts, or invoking CLI tools, GPT-5.5 is significantly more reliable.

Computer Use (interacting with UI, browsers, desktop applications): OSWorld-Verified shows

78.7% versus 78.0%. A difference of 0.7pp — essentially statistical parity.

If your agent controls a browser or a desktop application, there's no point in switching solely for

computer use. GPT-5.5 does not offer a real improvement over 5.4 in this area.

Enterprise: Financial, Legal, Analytical Tasks

GDPval is an OpenAI benchmark for knowledge-intensive work that a junior analyst might perform:

financial modeling, legal document analysis, research tasks.

GPT-5.5 achieves 84.9% on this benchmark.

However, there's a nuance I cannot ignore: GDPval is an internal OpenAI benchmark.

There is no independent verification of its methodology. I wouldn't build a business case for migration

solely on this figure. Instead, test it on a real set of your team's tasks.

Where GPT-5.5 truly excels in enterprise is in tasks requiring the simultaneous handling of

multiple large documents (a contract plus appendices plus correspondence), performing cross-analysis,

and providing a balanced response. This is precisely the scenario where the +37pp in long context

translates into a tangible difference in quality.

Multi-Document Research

Analysts, researchers, editors — anyone who regularly works with dozens of sources

simultaneously. With a large corpus, GPT-5.4 would start "forgetting" earlier documents

as it reached later ones. GPT-5.5 does not, as confirmed by MRCR v2.

Consider a specific case: a comparative analysis of 20+ technical articles where you need to track

contradictory statements and draw a balanced conclusion. Or preparing a market overview based on 15 reports from analytical firms. GPT-5.5 keeps all sources in mind —

GPT-5.4 degrades at this volume.

Where I would switch myself — and where I wouldn't

To summarize my personal view: if WebsCraft were to introduce an agent pipeline with 5+ steps

or a need to analyze large document corpora simultaneously, I would test GPT-5.5.

For current summarization and classification tasks, I would not hesitate to stick with GPT-5.4.

The general rule: a transition is justified when your task *structurally* requires

what GPT-5.5 does better — autonomous persistence or long context.

If a task can be solved in a single step or with a short prompt, there's no point in switching,

regardless of how good the announcement sounds.

Summary: Is it worth migrating now

After every major AI release, two camps emerge in the community: those who migrate

on day one, and those who wait "until things stabilize." Both approaches are flawed.

The first is driven by FOMO rather than informed decisions. The second is procrastination disguised as caution.

There's only one correct approach: determine if your specific tasks structurally benefit

from what GPT-5.5 does better. Not from what's written in the announcement. From what

is confirmed by independent benchmarks and your own A/B testing.

Below is my checklist. Not OpenAI's, not TechCrunch's. Mine, considering what I've verified, what raises skepticism, and where the numbers are truly convincing.

| Condition |

Decision |

Reason |

| Agent pipeline with 5+ steps of autonomous execution |

✅ Migrate |

Terminal-Bench 2.0 +7.6pp is not marketing; it's a measurable difference in persistence during failures. |

| Regularly inputting >200K tokens of context |

✅ Migrate |

MRCR v2: 74% vs 36.6% — the result has doubled. This is the biggest leap in the release. |

| Batch processing of large volumes offline |

✅ Migrate to Batch tier |

GPT-5.5 Batch = $2.50/$15 — same price as GPT-5.4 Standard. Risk-free. |

| Multi-document research with 10+ sources simultaneously |

✅ Test |

Long context is 5.5's main advantage. But test on your own documents, not benchmarks. |

| CLI agent: deployment, Git, bash automation |

✅ Test |

Terminal-Bench confirms a real difference. Perform A/B testing on your scripts. |

| Summarization, classification, extraction in large volumes |

❌ Do not migrate |

Quality is identical to GPT-5.4, price is higher. No reason to switch. |

| Basic RAG on a fixed corpus |

❌ Do not migrate |

RAG quality is determined by retrieval and chunking, not the model at the end of the pipeline. |

| Computer use: agent controls browser or UI |

❌ Do not migrate |

OSWorld-Verified: +0.7pp — statistical parity with GPT-5.4. |

| Hallucination rate is critical for the product |

⚠️ Caution |

BullshitBench: GPT-5.5 ≈ GPT-5.4 (~45%). Pro is worse (~35%). Claude leads here. |

| Latency-sensitive real-time endpoint |

⚠️ Test first |

Per-token latency is the same, but model behavior after migration can change unexpectedly. |

| API needed right now |

✅ Available |

As of April 24, 2026 — Responses and Chat Completions API are open. |

How to properly conduct A/B testing before migration

"Do A/B testing" is advice everyone gives, but few explain what exactly to measure.

Here's a minimal set of metrics that make sense:

-

Number of output tokens per typical task: if GPT-5.5 truly uses

40% fewer, your actual bill will increase by less than double. If not, you'll see it immediately

on your billing dashboard, not in marketing materials.

-

Task completion rate: for agent tasks — how many completed successfully

without manual intervention. This is the main metric where GPT-5.5 should show a real difference.

-

Number of retry iterations per task: if the agent "gets stuck" less often —

this is visible in logs. Compare the average number of steps to successful completion.

-

Output quality on your evaluation set: not on OpenAI's benchmarks,

but on real examples from your product. 20–30 representative tasks are sufficient

for an initial conclusion.

My personal verdict

GPT-5.5 is the first OpenAI release in a long time where I see a real technical reason

for migration in specific scenarios. Not "it got a little better everywhere," but

"it made a leap in a narrow niche." This is a more honest position than previous releases.

However, "a real reason for migration in specific scenarios" is not the same

as "migrate everyone right now." If your tasks don't fall into the top rows

of the checklist above, wait. Not because "you need to wait for it to stabilize,"

but because overpaying for capabilities you don't use is simply irrational.

And finally: whatever the model, it's your responsibility to test it on your own data.

I test it. I advise you to do the same.

❓ Frequently Asked Questions (FAQ)

Does GPT-5.5 completely replace GPT-5.4?

No — and this is fundamentally important to understand before making any migration decisions.

GPT-5.5 surpasses GPT-5.4 in specific niches: agent pipelines with 5+ steps,

long context exceeding 200K tokens, and CLI automation. For everything else —

summarization, classification, basic RAG, content generation — GPT-5.4 remains

a sufficient and cheaper option. A full replacement will occur when GPT-5.5's price

drops or when GPT-5.4 is retired. Currently, they are two different tools

for different tasks.

Is GPT-5.5 really twice as expensive as GPT-5.4?

The per-token price has doubled: $2.5/$15 → $5/$30 per 1M input/output tokens. But the cost

per task is not. OpenAI claims that GPT-5.5 uses ~40% fewer output tokens

for the same Codex tasks. If this is true, the actual additional cost is around 20%, not 100%.

Artificial Analysis confirmed the trend independently, but the exact figure depends on the task type.

Check your billing dashboard, not marketing materials.

The Batch tier ($2.50/$15) puts GPT-5.5 at the same standard price as GPT-5.4 —

for offline workloads, this is the easiest way to test without overspending risk.

Is GPT-5.5 available via API?

Yes, as of April 24, 2026 — via the Responses and Chat Completions API.

At launch on April 23, the API was closed: OpenAI explained this was due to the need for

additional safeguards for cybersecurity and bio-risks, which require a different approach

than ChatGPT. The model is now available standardly. Batch and Flex pricing are also active —

at half the standard rate.

What is GPT-5.5 Pro and who needs it?

GPT-5.5 Pro is the same base model but with more parallel test-time compute.

It costs $30/$180 per 1M tokens — six times more expensive than the base GPT-5.5.

It's positioned for research-grade and high-stakes tasks: scientific calculations, medical diagnostics,

complex legal analysis. But there's an important nuance: on BullshitBench, GPT-5.5 Pro

shows a ~35% pushback rate — worse than the base GPT-5.5 (~45%). More compute

doesn't mean fewer hallucinations. If response reliability is critical for you,

Pro doesn't solve this problem.

Does GPT-5.5 support a 1M token context?

Yes — and this is the first time "1M tokens" at OpenAI means a truly working capability,

not a marketing figure. MRCR v2 @ 1M tokens: GPT-5.5 — 74%, GPT-5.4 — 36.6%.

The result more than doubled. But there are limitations: Codex has a maximum of 400K tokens,

not 1M. The full million is only available via direct API. For most real

codebases, 400K is sufficient — but if you have a large monolith or multiple repositories

simultaneously, calculate in advance.

Is GPT-5.5 expected in the free ChatGPT tier?

As of publication, GPT-5.5 is only available to paid subscribers: Plus, Pro,

Business, and Enterprise in ChatGPT; Plus and above in Codex. The free tier has not received access.

OpenAI has not announced timelines for expanding access — if this is critical for you,

focus on OpenAI's official model page, not rumors.

Is GPT-5.5 better than Claude Opus 4.7?

It depends on the task — and this isn't a diplomatic answer, it's a fact.

GPT-5.5 leads on Terminal-Bench 2.0 (82.7% vs 69.4%) and long context (MRCR v2).

Claude Opus 4.7 leads on SWE-Bench Pro (64.3% vs 58.6%), MCP Atlas (79.1% vs 75.3%),

and BullshitBench (fewer hallucinations). On HLE without tools, it's also ahead (46.9% vs 41.4%).

If you're building a CLI agent or working with large context, use GPT-5.5.

If response reliability or tool orchestration is critical, it's worth testing Claude alongside.

✅ Conclusions

GPT-5.5 is the first OpenAI release in a long time where I see a real technical reason

for migration. Not "it got a little better everywhere," but a specific leap in a narrow niche.

This is a more honest position than previous releases — and precisely why it deserves serious consideration,

not dismissal as just another hype cycle.

However, "a real reason for migration in a narrow niche" is not the same as "migrate everyone right now."

Below is what I've extracted from this analysis as a practical summary.

-

🏆 The biggest win — long context: MRCR v2 +37.4pp — the result has doubled.

If you regularly input over 200K tokens in a single request, this is the sole argument sufficient to test GPT-5.5.

-

🤖 Agentic coding — real, not marketing: Terminal-Bench 2.0 +7.6pp

confirmed independently. Persistence during failures, fewer retry loops, higher task completion rate

on complex pipelines. If your agent performs 5+ autonomous steps, test it.

-

⚠️ Hallucinations haven't improved — and this shouldn't be ignored:

BullshitBench: GPT-5.5 ≈ GPT-5.4 (~45% pushback). Pro is worse (~35%).

Claude models still lead here. If response reliability is critical, GPT-5.5

does not solve this problem.

-

💰 Actual additional cost ~20%, not 100%: but only if your tasks

structurally benefit from GPT-5.5's token efficiency. For summarization and classification,

there's no saving, only an added cost. The Batch tier ($2.50/$15) completely negates

the difference for offline workloads.

-

❌ Where definitely not to migrate: summarization, classification, basic RAG,

content generation, computer use via UI. Here, GPT-5.4 is cheaper and provides identical results.

-

🔬 Rule #1 — unchanged: A/B test on your own real prompts and tool calls

before making any decision. Measure output tokens, task completion rate, and number

of retry iterations — not per-token price or OpenAI's announcement benchmarks.

AI models are being released more and more frequently. Six weeks between GPT-5.4 and GPT-5.5

is a new pace, and it won't slow down. Reacting to every release with a migration is

not a strategy; it's operational chaos. Reacting with ignorance is also not a strategy;

it's missing out on real advantages where they exist.

The only viable approach: know your tasks better than the marketers know their model.

Then, each subsequent release is not a cause for alarm or hype, but simply a checklist with two

columns: "I benefit" and "I don't benefit." I've tested GPT-5.5. You should test it yourself.