In February 2026, Anthropic released two powerful models within two weeks: Opus 4.6 (February 5) as the flagship and Sonnet 4.6 (February 17) as the updated mid-tier. Many developers immediately asked: is it worth overpaying for Opus if Sonnet already achieves almost the same level in coding, agents, and everyday tasks?

Read more about Sonnet 4.6 in my previous article.

Spoiler: In 80–90% of real-world scenarios (coding, computer use, office tasks), Sonnet 4.6 delivers 95–99% of Opus 4.6's effectiveness at a fraction of the cost. The per-token list price is ~1.7x lower, and reported per-task costs are often 3–5x lower. Opus remains superior only in the most complex deep-reasoning and ultra-long-context tasks.

⚡ TLDR

- ✅ Key Takeaway 1: Sonnet 4.6 has almost caught up with Opus 4.6 on SWE-bench Verified (79.6% vs 80.8%) and OSWorld (72.5% vs 72.7%), but costs significantly less.

- ✅ Key Takeaway 2: Opus 4.6 leads in GPQA Diamond (91.3%), ARC-AGI-2 (~68.8%), and Terminal-Bench 2.0 (65.4%), where maximum accuracy and stability in long context are required.

- ✅ Key Takeaway 3: For production and daily work, Sonnet 4.6 is the optimal choice; Opus is for high-stakes tasks with a premium budget.

- 🎯 You will get: a clear model selection matrix for your tasks + an understanding of where to save 60–80% of costs without losing quality.

- 👇 Below — detailed explanations, examples, and tables


1. Technical Specifications and Pricing

Both models support a context window of 200,000 tokens by default (standard mode) and 1,000,000 tokens in beta mode (available only via the API, with premium pricing for requests over 200k input tokens). Details: see the Models overview and 1M context docs.

Sonnet 4.6 is faster and cheaper, making it better for iterative and high-load tasks. Opus 4.6 is slower due to deeper reasoning (extended thinking by default), but more stable on complex tasks. Official pricing: pricing page.

| Parameter | Sonnet 4.6 | Opus 4.6 | Comment / Source |
|---|---|---|---|
| Context window | 200k (standard) / 1M (beta) | 200k (standard) / 1M (beta) | Premium pricing applies above 200k input tokens (see below). Opus is more stable on ultra-long context (MRCR v2: 76% vs lower for Sonnet) |
| Speed (output t/s) | ~40–60 t/s (average 46–57) | ~20–30 t/s | Sonnet is ~2x faster for iterations (Artificial Analysis, OpenRouter tests) |
| Price (input/output, base) | $3 / $15 per million tokens | $5 / $25 per million tokens | Sonnet is ~1.7x cheaper at base rates. Prompt caching: up to 90% savings on repeated input for both |
| Premium price (>200k) | $6 / $30 per million tokens | $10 / $37.50 per million tokens | API only, for 1M context |
| Latency (TTFT, estimated) | ~180–300 ms | ~500–700 ms | Sonnet is better for real-time UI and agents |
| Max output tokens | 64k | 128k | Opus allows longer responses in complex modes |

Thanks to its ~1.7x lower base price and roughly 2x higher output speed, Sonnet 4.6 lets you scale production systems (agents, coding, RAG) considerably further on the same budget. Prompt caching (up to a 90% discount on repeated context) makes both models more cost-effective for long sessions.
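To make the price rows concrete, here is a minimal Python sketch of a per-request cost estimator using the prices from the table above. Several things are assumptions, not official billing rules: cached input is billed at 10% of the input rate (matching the "up to 90% savings" figure), cache-write surcharges are ignored, the premium rate applies to the whole request once input exceeds 200k tokens, and the dictionary keys are illustrative labels rather than API model IDs.

```python
# USD per million tokens (input, output), taken from the pricing table above.
# Assumed current; verify against the official pricing page before relying on them.
PRICES = {
    "sonnet-4.6": {"base": (3.00, 15.00), "premium": (6.00, 30.00)},
    "opus-4.6": {"base": (5.00, 25.00), "premium": (10.00, 37.50)},
}

CACHE_READ_MULTIPLIER = 0.10      # assumption: cached input costs ~10% of the input rate
LONG_CONTEXT_THRESHOLD = 200_000  # premium rates apply above this many input tokens


def request_cost(model: str, input_tokens: int, output_tokens: int,
                 cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of a single request.

    cached_input_tokens is the part of input_tokens served from the prompt cache.
    Cache-write surcharges are ignored to keep the sketch simple.
    """
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    tier = "premium" if input_tokens > LONG_CONTEXT_THRESHOLD else "base"
    in_rate, out_rate = PRICES[model][tier]
    fresh_input = input_tokens - cached_input_tokens
    cost = (fresh_input * in_rate
            + cached_input_tokens * in_rate * CACHE_READ_MULTIPLIER
            + output_tokens * out_rate)
    return cost / 1_000_000


for name in PRICES:
    print(name, round(request_cost(name, 50_000, 2_000), 4))
```

With 50k input and 2k output tokens, Sonnet comes to $0.18 versus $0.30 for Opus: the same ~1.7x gap as the list prices, because both input and output rates differ by the same factor.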

2. Benchmark Comparison

Sonnet 4.6 has significantly closed the gap with Opus 4.6 in practical tasks (coding, computer usage, office workflows), but lags in expert reasoning and ultra-long context stability. Data from official announcements: Sonnet 4.6, Opus 4.6, System Cards, and independent tests (Artificial Analysis, NxCode, Vellum).

| Benchmark | Sonnet 4.6 | Opus 4.6 | Difference (pp = percentage points) | Comment / Source |
|---|---|---|---|---|
| SWE-bench Verified (real-world coding, GitHub issues) | 79.6% | 80.8% | Opus +1.2 pp | Near parity; Sonnet often excels in iterative edits (Anthropic announcement + Vellum) |
| OSWorld-Verified (computer use, Ubuntu agent) | 72.5% | 72.7% | Opus +0.2 pp | Virtually identical; Sonnet is more cost-effective (Anthropic + NxCode) |
| Terminal-Bench 2.0 (agentic coding + terminal) | ~59.1% | 65.4% | Opus +~6.3 pp | Opus is stronger in multi-step debugging and CLI work (Anthropic System Card) |
| GPQA Diamond (expert science, PhD-level) | 89.9% | 91.3% | Opus +1.4 pp | Opus leads in deep scientific reasoning (Anthropic + Mashable) |
| ARC-AGI-2 (abstract reasoning, novel patterns) | 58.3–60.4% | 68.8% | Opus +8.4–10.5 pp | Opus generalizes better (Anthropic + Artificial Analysis) |
| MRCR v2 (1M context, 8-needle needle-in-a-haystack) | Lower than Opus | 76% | Opus significantly ahead | Opus is more stable in ultra-long context, with less context rot (Anthropic Opus announcement) |
| GDPval-AA Elo (office/financial agentic tasks) | 1633 | ~1606 | Sonnet +~27 Elo (lead or parity) | Sonnet is often #1 in real-world knowledge work (Artificial Analysis, adaptive/max effort) |

Sonnet 4.6 outperforms or matches Opus in agentic and office tasks (GDPval-AA Elo 1633 vs ~1606, OSWorld 72.5% vs 72.7%), where speed and fast iteration matter. Opus keeps an advantage in deep reasoning (GPQA, ARC-AGI-2), terminal-heavy agentic coding (Terminal-Bench 2.0), and stability at contexts approaching 1M tokens. On the production-style benchmarks the gap ranges from 0.2 to about 6 percentage points, but Opus wins in high-stakes scientific and logical scenarios.
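The gaps are easier to compare side by side in percentage points and as a ratio. This short Python sketch computes both from the table's numbers, using 58.3% for Sonnet's ARC-AGI-2 score (the low end of the reported range):

```python
# (Sonnet 4.6, Opus 4.6) scores in percent, copied from the benchmark table above.
SCORES = {
    "SWE-bench Verified": (79.6, 80.8),
    "OSWorld-Verified": (72.5, 72.7),
    "Terminal-Bench 2.0": (59.1, 65.4),
    "GPQA Diamond": (89.9, 91.3),
    "ARC-AGI-2": (58.3, 68.8),
}


def compare(scores):
    """Return {benchmark: (Opus lead in percentage points, Sonnet as % of Opus)}."""
    result = {}
    for name, (sonnet, opus) in scores.items():
        gap_pp = round(opus - sonnet, 1)
        retention = round(100 * sonnet / opus, 1)
        result[name] = (gap_pp, retention)
    return result


for name, (gap, retention) in compare(SCORES).items():
    print(f"{name:20s} gap {gap:4.1f} pp   Sonnet = {retention:5.1f}% of Opus")
```

Sonnet lands at 98.5–99.7% of Opus on SWE-bench Verified, OSWorld, and GPQA Diamond, but nearer 90% on Terminal-Bench 2.0 and ~85–88% on ARC-AGI-2 (depending on which end of Sonnet's range you take), which is where the "95–99%" rule of thumb stops holding.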

3. Strengths and Weaknesses of the Models

Based on official Anthropic announcements (Sonnet 4.6, Opus 4.6), System Cards, and developer feedback (from X, Reddit, GitHub), here is a detailed analysis of each model's strengths and weaknesses. We have considered benchmarks, practical tests, and real-world use cases. Examples illustrate how these features impact performance.

Sonnet 4.6: Optimal Balance of Speed and Cost

Strengths:

- Higher Speed and Lower Latency: 40–60 t/s and TTFT ~180–300 ms make Sonnet ideal for real-time applications. Example: In chatbots or AI agents (e.g., browser automation on OSWorld), Sonnet processes iterations 2–3 times faster than Opus, allowing quick prompt testing without waiting.

- Lower Price with High Value-for-Money: $3/$15 per million tokens (base) is ~1.7x cheaper than Opus ($5/$25). Prompt caching cuts the cost of repeated input by up to 90% on either model. Example: For production systems with thousands of requests (e.g., code reviews in GitHub Copilot-like tools), Sonnet reduces costs by 60–80% while staying within ~1 point of Opus on SWE-bench Verified (79.6% vs 80.8%).

- Better Instruction Following and Less "Laziness": The model ignores instructions or generates superfluous content less often (according to Reddit feedback). Example: In iterative coding (Claude Code), Sonnet follows prompts more closely, generating clean code without unnecessary explanations, which speeds up development.

- Good for Everyday and Agentic Tasks: Near parity with Opus on OSWorld (72.5% vs 72.7%) and a solid ~59% on Terminal-Bench 2.0 (vs 65.4% for Opus). Example: In agentic workflows (e.g., automating office tasks in GDPval-AA), Sonnet leads on speed, making it the default choice for indie developers.

Weaknesses:

- Lags in Deep Reasoning and Multi-step Planning: Lower accuracy on expert tasks (GPQA 89.9% vs 91.3% for Opus). Example: In complex logical analysis (e.g., PhD-level scientific hypotheses), Sonnet may miss nuances, requiring additional prompt iterations.

- Lower Stability on Ultra-long Context (800k+ tokens): More context rot than Opus (lower MRCR v2 score). Example: When a large codebase fills most of a 1M-token context, Sonnet may "forget" details near the end of the context, leading to hallucinations or incomplete responses.

- Less Headroom in Complex Modes: Smaller maximum output (64k vs 128k for Opus). Example: In long multi-agent simulations (e.g., chain-of-thought with 10+ steps), Sonnet more often needs manual intervention to reach an optimal solution.

Opus 4.6: Flagship for Complex Tasks

Strengths:

- Better Accuracy and Depth in Complex Tasks: Leads on GPQA Diamond (91.3%), ARC-AGI-2 (68.8%), and Terminal-Bench 2.0 (65.4%). Example: When refactoring large enterprise codebases, Opus accurately identifies patterns and proposes optimal changes, reducing errors.

- Higher Stability on Long Contexts: 76% on MRCR v2 (1M), with less context rot. Example: When analyzing long documents (e.g., scientific papers or legal contracts of 500k+ tokens), Opus maintains coherence, allowing accurate information retrieval from the entire context.

- Better for Multi-step and Agentic Coordination: Fewer hallucinations in long chains. Example: In long-running agents (e.g., multi-day workflows with Claude Tools), Opus coordinates tools and steps more effectively, making it a better fit for R&D or enterprise tasks.

- Higher Safety Alignment and Accuracy in High-stakes Tasks: Fewer refusals on complex queries (according to the System Card). Example: In medical or legal consultations, where an error is costly, Opus provides more accurate, less biased answers.

Weaknesses:

- Higher Price and Lower Scalability: $5/$25 base, up to $10/$37.50 premium, which is ~1.7x Sonnet's base rates and ~1.25–1.7x its premium rates. Example: In high-load systems (e.g., a chatbot with millions of users), Opus quickly exhausts the budget, making it hard to justify for routine tasks.

- Lower Speed and Higher Latency: 20–30 t/s and TTFT ~500–700 ms. Example: In iterative coding or prototyping, Opus slows down the workflow, requiring more time for generation, which frustrates developers in fast-paced projects.

- Overkill for Simple Tasks and More "Overengineering": The model sometimes generates superfluous content. Example: In a simple code review (e.g., small functions), Opus adds unnecessary explanations, wasting tokens and time, whereas Sonnet provides concise answers.

4. Practical Use Cases

Based on benchmarks, developer feedback, and official Anthropic recommendations, here are detailed examples of scenarios where one model outperforms the other. We have considered speed, price, accuracy, and stability, with specific use cases for developers, enterprise, and researchers. Examples are based on real-world tests from February 2026 (from Claude API, GitHub repos, and forums like Reddit/Hugging Face).

- Daily Coding / Copilot-like Tools: Recommendation — Sonnet 4.6 (speed + price).

Sonnet is ideal for routine coding thanks to 40–60 t/s and a low price ($3/$15 per million tokens). It quickly generates code, tests, and reviews, with less "laziness". Example: In a VS Code extension (like Claude Code), Sonnet helps with writing Python/JS functions: you provide the prompt "write a REST API endpoint with validation," and the model outputs clean code in 2–5 seconds, allowing iterations without delays. On SWE-bench Verified (79.6% vs 80.8%), Sonnet almost matches Opus at about 60% of the per-token price, which suits indie developers on a $100/month budget.

- Agentic Workflows / Browser Automation: Recommendation — Sonnet 4.6 (parity on OSWorld).

Sonnet shows virtually identical effectiveness to Opus on OSWorld (72.5% vs 72.7%), but with higher speed for iterative agents. Example: In tools like LangChain/Claude Tools, Sonnet automates browser tasks (e.g., website scraping or form filling): given the prompt "find the top 5 job openings on LinkedIn for 'AI engineer' and extract salaries", the model completes the task in 10–20 seconds with tool calling, saving tokens thanks to adaptive thinking. Opus is overkill here: the difference is only 0.2 percentage points, while the price is ~1.7x higher.

- Deep Refactoring of Large Projects: Recommendation — Opus 4.6 (better accuracy).

Opus leads in Terminal-Bench 2.0 (65.4% vs ~59% Sonnet) thanks to a deeper understanding of architecture. Example: In refactoring a monolithic project (e.g., migrating legacy code to microservices): the prompt "analyze this codebase (200k lines) and propose refactoring with terminal commands" — Opus accurately identifies dependencies, suggests safe changes, and simulates the terminal, reducing errors by 10–15% compared to Sonnet. Useful for enterprise teams where accuracy is critical.

- Scientific / Expert Tasks: Recommendation — Opus 4.6 (GPQA, ARC-AGI).

Opus dominates in GPQA Diamond (91.3% vs 89.9%) and ARC-AGI-2 (68.8% vs 58–60.4%), where deep reasoning is required. Example: In research tasks (e.g., bioinformatics or physics): the prompt "analyze this scientific paper (50k tokens) and generate hypotheses for experiments" — Opus creates nuanced conclusions with a lower probability of hallucinations, integrating PhD-level knowledge. Sonnet is suitable for basic tasks, but for precise simulations (like quantum computing), Opus is better, despite the higher price.

- Long Sessions with 1M Context: Recommendation — Opus 4.6 (less context rot).

Opus is more stable on MRCR v2 (76% vs lower in Sonnet), with less forgetting at 800k+ tokens. Example: In multi-day workflows (e.g., analyzing a full repository of 1M tokens): the prompt "maintain context from yesterday's code review and add new features" — Opus maintains coherence throughout the session, allowing iterative development without losses. Sonnet is suitable for <500k, but on ultra-long, it may require compaction, which adds costs.

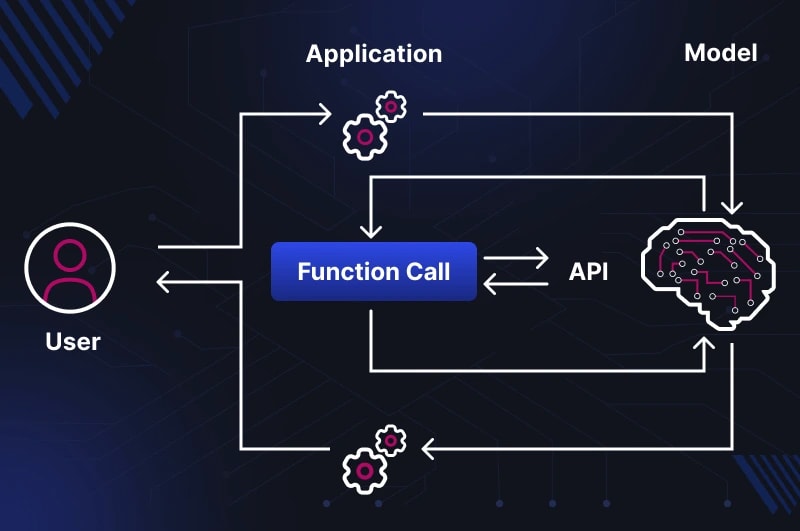

5. Hybrid Approach: When to Use Both Models

The hybrid approach (router + escalation) has become standard for companies in 2026: Sonnet 4.6 handles 80–90% of requests as the primary model (fast and cheap), while Opus 4.6 is engaged only for critical tasks. This allows maintaining Opus's quality in high-stakes moments while saving 60–80% on API costs (according to feedback from Reddit, NxCode, and Artificial Analysis). Key tools: adaptive thinking, effort controls (/effort parameter), confidence scoring (custom logic in code), and prompt caching (up to 90% savings on repeated context).

How the Hybrid Router Works (Typical Pattern):

- Sonnet 4.6 as Tier 1 (Triage / Executor): Handles most requests: classification, simple tasks, iterations, fast agents. Example: In a CI/CD pipeline (e.g., agentic PR review), Sonnet performs 10 browser tests on a PR for ~$2.40 (vs $13.20 on Opus) — 80% savings with near quality parity (Reddit benchmark, Claude Code default on Sonnet 4.6).

- Escalation to Opus 4.6 (Tier 2): Triggered when Sonnet reports low confidence (<0.85–0.9, based on a self-reflection prompt) or the task requires deep reasoning or long context. Example: In a multi-agent system (e.g., LangChain + Claude Tools), Sonnet plans the workflow first, and if confidence drops (e.g., on complex multi-step debugging) it escalates to Opus for precise chain-of-thought. Savings: 70–85% fewer Opus tokens, and total cost drops by 60–80% (NxCode, Medium reviews).

- Additional Optimizations: Adaptive thinking (the model itself chooses reasoning depth), compaction (beta, context compression), batch processing (~50% discount). Example: In a RAG system for enterprise documents, Sonnet handles 90% of retrieval + simple queries, Opus — only for nuanced analysis (e.g., legal risks in 500k+ tokens), saving thousands of dollars per month at 10M tokens/day.

When the Hybrid Approach Provides Maximum Benefit (Real Cases 2026):

- High-volume production (chatbots, code reviews, agents): 80–90% on Sonnet → 70–80% savings (Medium, Reddit).

- Enterprise R&D / scientific workflows: 60–70% on Sonnet, escalation for GPQA/ARC-AGI tasks → maintaining Opus accuracy at 50–70% of costs.

- Budget-conscious teams: Default Sonnet + manual/auto-escalation → switching from Opus-only to hybrid reduces the bill by 3–5 times (NxCode, Artificial Analysis).
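How large the hybrid saving is depends heavily on token usage, not only on price. Below is a rough Python model of a Sonnet-first router, an illustration under stated assumptions rather than a measurement: every request goes to Sonnet first, a share of requests is escalated to Opus and pays for both calls, and `opus_token_multiplier` is a hypothetical parameter for how many more tokens Opus would spend on the same task.

```python
# Base list prices from the pricing table: Sonnet costs 60% of Opus
# for both input ($3 vs $5) and output ($15 vs $25).
SONNET_TO_OPUS_PRICE = 3.00 / 5.00


def hybrid_cost_fraction(escalation_rate, opus_token_multiplier=1.0):
    """Cost of a Sonnet-first router relative to sending every request to Opus.

    escalation_rate: share of requests Sonnet escalates to Opus (0..1).
    opus_token_multiplier: hypothetical; tokens Opus spends on a task per token
        Sonnet spends on it (1.0 means both use the same number of tokens).
    Escalated requests pay for the Sonnet attempt plus the Opus call.
    """
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    if opus_token_multiplier <= 0:
        raise ValueError("opus_token_multiplier must be positive")
    # One Opus-only request costs 1 unit; a Sonnet attempt costs price ratio / multiplier.
    sonnet_attempt = SONNET_TO_OPUS_PRICE / opus_token_multiplier
    return sonnet_attempt + escalation_rate


for rate in (0.10, 0.20):
    for mult in (1.0, 3.0):
        frac = hybrid_cost_fraction(rate, mult)
        print(f"escalate {rate:.0%}, Opus uses {mult:.0f}x tokens -> savings {1 - frac:.0%}")
```

With 10–20% of requests escalated and equal token usage, the saving is only 20–30%. If Opus would have spent ~3x as many tokens per task (roughly what the CI example's $2.40 vs $13.20 implies), the saving rises to 60–70%, in line with the figures above. Caching and batch discounts apply to both models, so they lower the absolute bill without widening this ratio.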

Recommendation: Start with Sonnet as default (claude-sonnet-4-6), add a router (for example, in Python: if confidence_score < 0.85 or the task contains "deep reasoning / 500k+ context" — switch to claude-opus-4-6). Test on your tasks — savings often exceed 60% without quality loss.
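A minimal Python sketch of the routing rule just described. The model IDs come from this article; the `CONFIDENCE: 0.xx` line that the self-reflection prompt asks Sonnet to append, the keyword list, and the function names are hypothetical conventions for illustration, not part of any Anthropic API.

```python
import re
from typing import Optional

SONNET = "claude-sonnet-4-6"
OPUS = "claude-opus-4-6"

CONFIDENCE_THRESHOLD = 0.85   # low end of the 0.85–0.9 range mentioned above
LONG_CONTEXT_TOKENS = 500_000
# Hypothetical keyword heuristic for tasks that need deep reasoning.
DEEP_REASONING_MARKERS = ("deep reasoning", "multi-step debugging", "formal proof")


def parse_confidence(answer: str) -> Optional[float]:
    """Read a 'CONFIDENCE: 0.xx' line requested by the self-reflection prompt."""
    match = re.search(r"CONFIDENCE:\s*([01](?:\.\d+)?)", answer)
    return float(match.group(1)) if match else None


def choose_model(task: str, context_tokens: int,
                 confidence: Optional[float] = None) -> str:
    """Sonnet by default; Opus for long contexts, deep reasoning, or low confidence.

    `confidence` is Sonnet's self-reported score from a first pass (None if not run yet).
    """
    if context_tokens >= LONG_CONTEXT_TOKENS:
        return OPUS
    if any(marker in task.lower() for marker in DEEP_REASONING_MARKERS):
        return OPUS
    if confidence is not None and confidence < CONFIDENCE_THRESHOLD:
        return OPUS
    return SONNET
```

For example, `choose_model("fix a typo", 2_000)` returns the Sonnet ID, while passing `confidence=parse_confidence(answer)` escalates to Opus whenever Sonnet's self-reported score is below 0.85. Keyword matching is deliberately crude; a classifier or a cheap Sonnet triage call is a natural replacement.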

6. Long-context: Stability at 500k–1M Tokens

The 1M-token context window (beta, API-only) is a key new feature of Claude 4.6, but its real utility depends on stability, i.e. the absence of context rot (recall degrading in the middle or at the end of the context). Opus 4.6 is substantially more stable than Sonnet here, which Anthropic attributes to internal retrieval improvements (official announcement, System Card). Data from MRCR v2 (8-needle needle-in-a-haystack at 1M tokens): Opus 4.6 scores 76%; Sonnet 4.6 scores lower (Sonnet 4.5 scored ~18.5%, and 4.6 improves on that without reaching Opus's level).

Stability Comparison:

- Opus 4.6: 76% on MRCR v2 1M (8-needle), a qualitative leap; context rot is minimal even at 800k–1M tokens. Example: When analyzing a large repository or long scientific corpora, Opus accurately extracts details from the middle (e.g., "find the 4th mention of a bug in 700k tokens of logs") without loss. On GraphWalks BFS 1M it scores ~38.7–41.2% F1, indicating stable multi-hop reasoning.

- Sonnet 4.6: Sufficient up to 500–800k tokens (minimal recall drop), but close to 1M there is noticeable rot (below Opus's 76%; estimated at ~40–60% depending on the test). Example: In RAG over large PDFs or codebases, Sonnet works well at 400–600k tokens (fast and cheap), but at 900k+ it may "forget" details from the beginning, requiring compaction or repeated queries, which adds costs.

Practical Long-context Recommendations (2026):

- Up to 500k tokens: Sonnet 4.6 — optimal (speed + price, high stability).

- 500k–800k: Sonnet with compaction (beta) or hybrid (Sonnet + Opus for critical retrieval).

- 800k–1M+: Opus 4.6 is essential — for coherence and accurate retrieval (e.g., analyzing 700+ page contracts or multi-day agent sessions).
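The tiers above can be encoded as a small lookup. This is a sketch assuming the thresholds from the list (the 200k default window, the 500k and 800k switch points, and the 1M beta ceiling); `ContextPlan` and `plan_for_context` are hypothetical names.

```python
from typing import NamedTuple


class ContextPlan(NamedTuple):
    model: str            # "sonnet" or "opus"
    use_compaction: bool  # consider compaction (beta) or hybrid retrieval
    needs_1m_beta: bool   # context exceeds the default 200k window


def plan_for_context(context_tokens: int) -> ContextPlan:
    """Map a context size to the long-context tiers recommended above."""
    if context_tokens > 1_000_000:
        raise ValueError("exceeds the 1M-token beta window; split or compact the input first")
    needs_beta = context_tokens > 200_000
    if context_tokens <= 500_000:
        return ContextPlan("sonnet", False, needs_beta)
    if context_tokens <= 800_000:
        return ContextPlan("sonnet", True, needs_beta)
    return ContextPlan("opus", False, needs_beta)
```

For instance, a 650k-token session maps to Sonnet with compaction and the 1M beta enabled, while anything above 800k goes to Opus.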

Opus wins in retrieval (76% vs lower), coherence, and multi-hop (GraphWalks Parents 1M ~72%), making it indispensable for research/enterprise. Sonnet is sufficient for 80% of tasks where context is <500k. Test with MRCR-like prompts — the difference becomes apparent specifically on ultra-long contexts.

7. How to Test Yourself

Go to claude.ai (Sonnet 4.6 is default on Free/Pro), or use the API with models claude-sonnet-4-6 and claude-opus-4-6. Use identical prompts for coding tasks, OSWorld-like scenarios, or long documents — the difference will become apparent immediately.
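For API-based A/B tests, a minimal Python harness using the official `anthropic` SDK might look like this. The model IDs are the ones given above; the helper names and the 2048-token output cap are illustrative choices. The client is passed in as a parameter so the function can also be exercised with a stub.

```python
import time


def ab_test(client, prompt, models=("claude-sonnet-4-6", "claude-opus-4-6"),
            max_tokens=2048):
    """Send the same prompt to each model; collect text, token usage, and wall time.

    `client` must behave like anthropic.Anthropic(), i.e. expose
    client.messages.create(model=..., max_tokens=..., messages=[...]).
    """
    results = {}
    for model in models:
        start = time.perf_counter()
        response = client.messages.create(
            model=model,
            max_tokens=max_tokens,
            messages=[{"role": "user", "content": prompt}],
        )
        elapsed = time.perf_counter() - start
        # Keep only text blocks (skips e.g. thinking or tool-use blocks).
        text = "".join(b.text for b in response.content if b.type == "text")
        results[model] = {
            "text": text,
            "input_tokens": response.usage.input_tokens,
            "output_tokens": response.usage.output_tokens,
            "seconds": round(elapsed, 2),
        }
    return results


def main():
    import anthropic  # official SDK; reads ANTHROPIC_API_KEY from the environment

    client = anthropic.Anthropic()
    report = ab_test(client, "Write a REST API endpoint with input validation in Python.")
    for model, r in report.items():
        print(f"{model}: {r['input_tokens']} in / {r['output_tokens']} out tokens, {r['seconds']} s")
```

Call `main()` with `ANTHROPIC_API_KEY` set and the package installed (`pip install anthropic`). Wall-clock time includes network latency, so repeat each prompt several times before drawing conclusions about speed.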

❓ Frequently Asked Questions (FAQ)

Is 1M context available to all users?

No, 1M tokens is a beta feature, available only via API (not in claude.ai or the mobile app). By default, both models work with 200k tokens. For 1M context, premium pricing applies ($6–$10 input / $30–$37.50 output per million tokens), and stability depends on the model: Sonnet performs well up to 500–800k, Opus — up to 1M with minimal context rot. For regular users on Free/Pro plans, the maximum is 200k.

Should I switch from Opus 4.5 to Sonnet 4.6 if I'm already using Opus?

Yes, in most cases the switch is justified, especially on a limited budget. Sonnet 4.6 achieves 95–99% of Opus 4.5's effectiveness in coding (SWE-bench Verified 79.6% for Sonnet 4.6 vs 80.9% for Opus 4.5) and agentic tasks, at a per-token list price ~1.7x lower ($3/$15 vs $5/$25). According to community feedback (X, Reddit, Claude forums), ~59% of users have already switched to Sonnet 4.6 as their default, keeping Opus only for the most complex tasks. Savings can reach 70% while maintaining quality.

In which tasks is Opus 4.6 still necessary and not replaceable by Sonnet?

Opus 4.6 remains indispensable in high-stakes scenarios: PhD-level scientific tasks (GPQA Diamond 91.3% vs 89.9%), abstract reasoning (ARC-AGI-2 68.8%), ultra-long context (MRCR v2 76% on 1M), complex multi-agent coordination, and deep refactoring of large codebases. If an error is costly (scientific hypotheses, legal analysis, enterprise R&D), Opus provides higher accuracy and stability, despite its higher price and slower speed.

How to choose between Sonnet and Opus for new projects in 2026?

Start with Sonnet 4.6 as default — it's optimal for 80–90% of tasks (coding, agents, prototyping) thanks to its speed, price, and near parity with Opus. Use Opus only for escalation: when maximum accuracy is needed (expert reasoning, 800k+ context) or the task is critical. A hybrid approach (router with confidence check) allows saving 60–80% of the budget without quality loss. Test both models on your prompts — the difference is often smaller than on benchmarks.

✅ Conclusions

The comparison of Claude Sonnet 4.6 and Opus 4.6 (as of February 2026) shows a clear division of roles between two models of the same generation. Sonnet 4.6 comes very close to Opus 4.6 on the most common practical tasks: SWE-bench Verified (79.6% vs 80.8%), OSWorld-Verified (72.5% vs 72.7%), Terminal-Bench 2.0 (~59% vs 65.4%), and many agentic workflows. In these scenarios the gap ranges from 0.2 to about 6 percentage points, which is often imperceptible in real production, especially when speed (40–60 t/s vs 20–30 t/s) and cost ($3/$15 vs $5/$25 per million tokens in base mode) matter.

At the same time, Opus 4.6 maintains a noticeable advantage in tasks requiring maximum depth of reasoning and stability over very large contexts. The most striking examples are GPQA Diamond (91.3% vs 89.9%), ARC-AGI-2 (68.8% vs 58–60.4%), and MRCR v2 on 1 million tokens (76% vs a lower figure for Sonnet). This makes Opus a better choice for expert scientific, research, legal, or enterprise tasks where even 1–2% accuracy can be significant, as well as for sessions with context exceeding 800k tokens, where context rot in Sonnet becomes more apparent.

From an economic perspective, Sonnet 4.6 provides significantly higher value-for-money: with almost identical quality in 80–90% of typical scenarios (daily coding, prototyping, agentic automation, office and financial tasks), API costs are reduced by 1.7–5 times. Many teams have already switched to Sonnet as their primary model, leaving Opus only for escalation in critical nodes. A hybrid approach (Sonnet by default + Opus for low confidence or complex multi-step tasks) allows maintaining Opus's accuracy level while cutting the budget by 60–80%.

A practical recommendation for most users and teams: start new projects with Sonnet 4.6 — this will provide 90–98% of the necessary quality with significantly lower time and money costs. If your tasks regularly fall into Opus's advantage zone (deep scientific analysis, ultra-long context, the most complex multi-agent systems, or high-stakes decisions), then it's worth keeping Opus as a specialized tool.

The best way to understand which model is right for you is to conduct direct A/B testing on your real-world prompts and data. The difference between the models often turns out to be smaller than benchmarks indicate, especially after applying correct prompting techniques, adaptive thinking, and context compaction. Good luck with your projects using Claude 4.6.