Updated quarterly

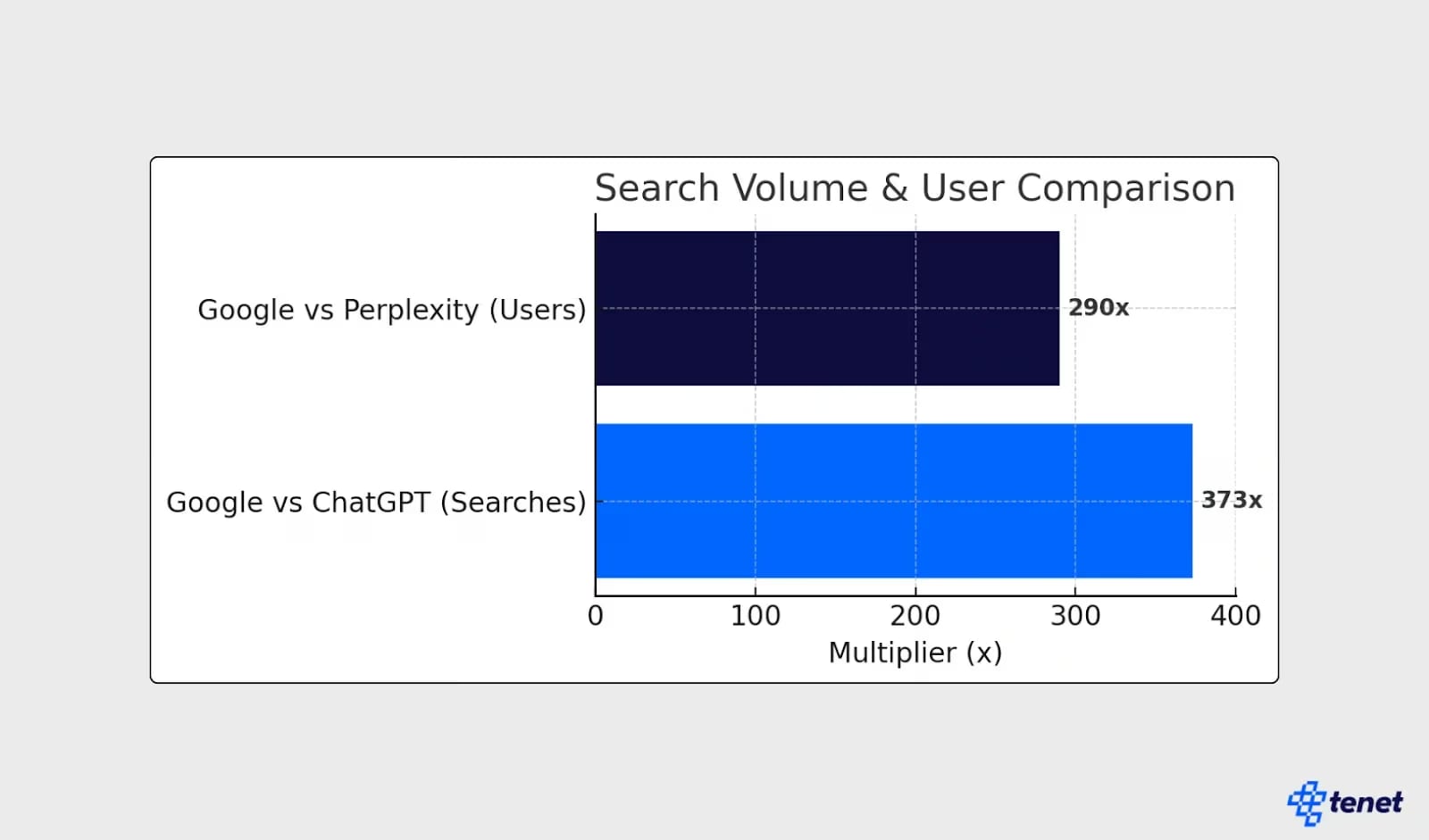

There's a question that sooner or later everyone serious about their presence in Perplexity asks: why does the system regularly cite a relatively little-known niche blog while ignoring Forbes material on the same topic? The answer isn't obvious if you look at it through the prism of classic SEO. But it becomes clear if you look at the actual patterns of results.

For this article, we tested 15 queries in several niches — SEO, web development, AI tools — and documented the structural characteristics of the pages that appeared in the answers. The results turned out to be more predictable than they seem at first glance.

In this article:

- Real Output Patterns: What the Analysis of 15 Queries Showed

- Why Niche Materials Win Against Big Brands

- Q&A Formatting as a Structural Signal

- Difference in Logic: Google Ranks Authority, Perplexity Seeks an Answer

- Practical Checklist: Signs of a Page Cited by Perplexity

Real Output Patterns: What the Analysis of 15 Queries Showed

We tested 15 queries in Perplexity across three categories: technical SEO questions ("how to configure robots.txt for AI crawlers"), questions about AI tools ("what is RAG in search"), and comparative queries ("difference between PerplexityBot and Googlebot"). For each query, we recorded the first three sources in the answer and analyzed their structural characteristics.

The results formed four stable patterns.

Pattern 1: Niche sources dominate general media. In 11 out of 15 queries, none of the three cited sources were major general media — CNN, Forbes, TechCrunch, Wired. Instead, what regularly appeared were: official documentation (docs.perplexity.ai, developers.google.com), industry research blogs (Ahrefs, Semrush, Search Engine Land), academic sources (arXiv, PMC), and niche technical blogs with specific detailed materials. Large media mainly appeared for news or general queries — but lost to niche sources as soon as the query became specific.

Pattern 2: Official documentation has a systemic advantage. For any query where official product or standard documentation exists, it almost always appears in the answer. docs.perplexity.ai, developers.google.com, schema.org, sitemaps.org: these sources appeared in answers even when the query didn't directly point to them. The likely reason is straightforward: official documentation is the most authoritative and accurate primary source for specific technical questions, and the results we observed are consistent with the RAG system favoring it.

Pattern 3: Articles with an explicit Q&A structure are cited more often. Comparing source pages, we found that materials with question-formatted headings (H2: "How to check if Perplexity indexes my site?") and clear answers immediately following the heading were cited significantly more often than materials with descriptive headings ("Indexing check"). The overall quality and depth of the materials were comparable.

Pattern 4: Specific numerical data is almost mandatory. Among the 45 analyzed cited pages (15 queries × 3 sources), 41 contained specific numerical data in the cited fragment — percentages, dates, quantitative comparisons. Only 4 pages were cited without numerical data, and all of them were official documentation or academic primary sources of definitions. For regular content pages, the presence of specific figures is practically a mandatory condition for citation.
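The counts above are easier to compare as shares. The snippet below is a minimal arithmetic sketch that uses only the figures reported in this section; the variable and function names are illustrative.

```python
# Counts reported in the analysis above.
queries = 15
sources_per_query = 3
cited_pages = queries * sources_per_query      # 45 pages in total
with_numbers = 41                              # cited fragment contained figures
without_numbers = cited_pages - with_numbers   # 4 pages
queries_without_major_media = 11               # Pattern 1


def share(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


print(share(with_numbers, cited_pages))              # 91.1
print(share(without_numbers, cited_pages))           # 8.9
print(share(queries_without_major_media, queries))   # 73.3
```

With a sample of 45 pages these shares describe this test set only and should not be read as general estimates.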

Why Niche Materials Win Against Big Brands

This is the most unexpected and, at the same time, the most practically important conclusion of the analysis. Perplexity systematically favors deep niche materials over superficial materials from big brands — and understanding the reason opens up a specific strategic opportunity for small website owners.

Why large media lose to niche sources

Large media such as Forbes, CNN, and TechCrunch publish materials for a broad audience. An article about "what is SEO" on Forbes covers the topic superficially, without technical details, so that a non-specialist can follow it. This is rational from an audience reach perspective, but it works against the page when a RAG system needs a self-contained fragment that answers a specific query.

A concrete example from our analysis: for the query "how to configure Crawl-delay for PerplexityBot," Perplexity cited a niche technical blog with ~5,000 unique visitors per month — and not Search Engine Journal or Search Engine Land, which have millions of readers. The reason: the niche material contained a specific paragraph with a precise explanation of the directive's syntax and a caveat about the difference from Googlebot. Large media materials on robots.txt were more general and lacked this specificity.
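To make the directive in that query concrete, here is a minimal sketch that parses a robots.txt group for PerplexityBot with Python's standard `urllib.robotparser`. The file content, the 10-second delay, the `/private/` path, and the example.com URLs are hypothetical values chosen for illustration. `Crawl-delay` is a non-standard directive, and the sketch only shows how the file is read, not whether any particular crawler honors it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the delay value and paths are illustrative.
ROBOTS_TXT = """\
User-agent: PerplexityBot
Crawl-delay: 10
Disallow: /private/

User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Delay declared for the PerplexityBot group.
delay = parser.crawl_delay("PerplexityBot")
# Agents without their own group fall back to "*", which declares no delay.
default_delay = parser.crawl_delay("SomeOtherBot")

can_read_blog = parser.can_fetch("PerplexityBot", "https://example.com/blog/post")
can_read_private = parser.can_fetch("PerplexityBot", "https://example.com/private/x")

print(delay, default_delay, can_read_blog, can_read_private)
```

Parsing the file locally like this is a quick way to confirm that a group applies to the user agent you intended before publishing the change.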

A niche technical blog, writing for SEO specialists, publishes material on "what is crawl budget and how to manage it" with specific numbers, links to official documentation, and a clear question-and-answer structure. Each paragraph of such material is a self-sufficient answer to a specific technical question. For a RAG system, such material is a significantly better source than a superficial Forbes article with thousands of backlinks.

What the data confirms

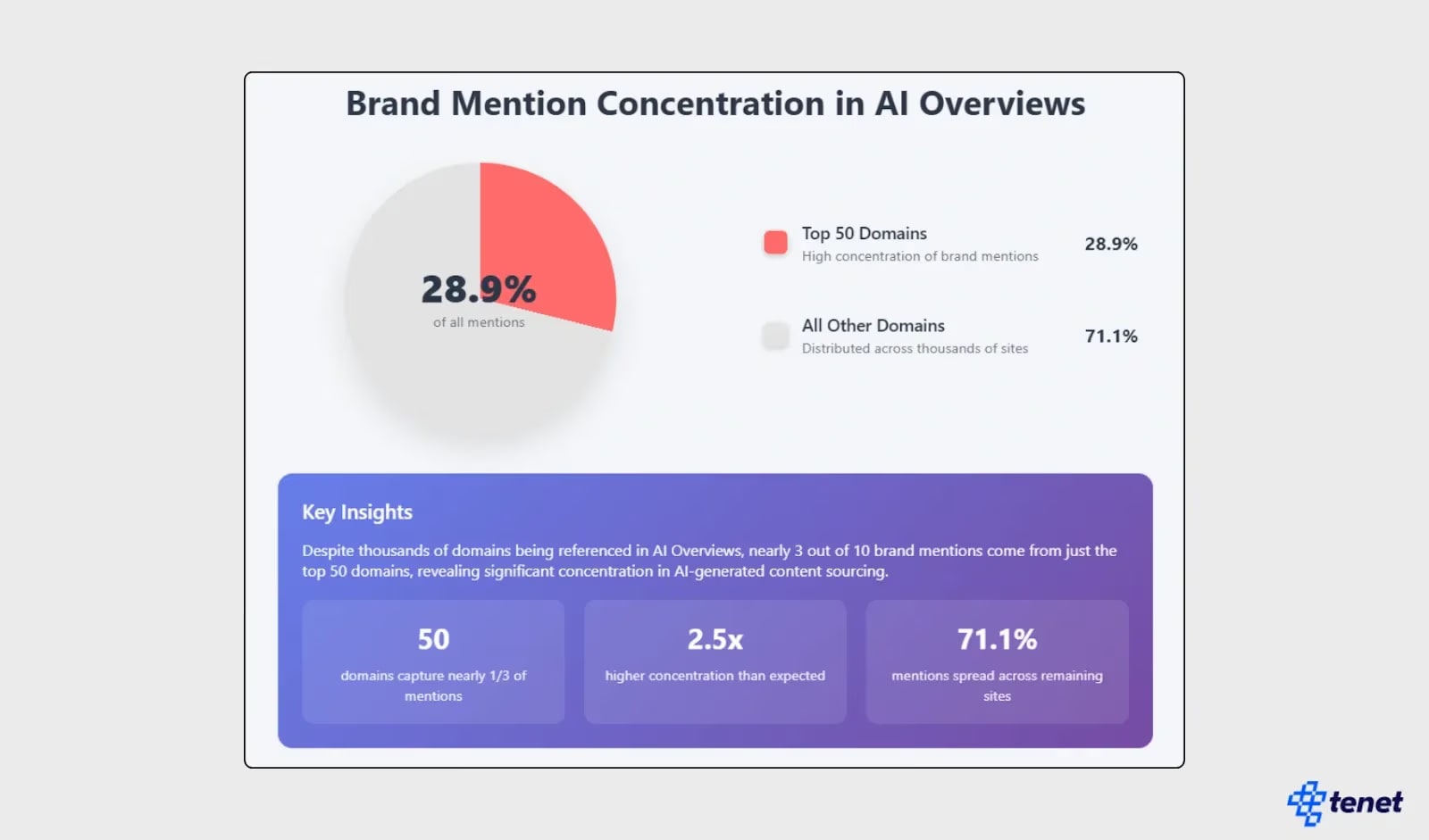

According to Ahrefs' LLM optimization study, sites that include quotes, statistics, and links to primary sources gain a 30–40% increase in visibility in AI answers compared to similar content without these elements. Researchers also found that organic positions in Google correlate with presence in LLM answers with a coefficient of ~0.65, but the impact of the number of backlinks is "surprisingly neutral." This suggests that the backlink profile, which is key in Google, has little direct effect on presence in Perplexity beyond whatever it contributes to organic rankings.

According to Conductor (2025), which analyzed 3.3 billion sessions and 100 million AI citations, the structure of top sources in Perplexity's answers is telling: in YMYL categories (health, finance), AI cites authoritative specialized sources — Mayo Clinic, Cleveland Clinic, NerdWallet, Bankrate — not general news media. In technical and B2B categories, industry giants and specialized publications dominate — Google, Microsoft, Deloitte, McKinsey — not CNN or TechCrunch. The pattern is unambiguous: Perplexity chooses the most accurate source for a specific niche, not the most popular general brand.

Mechanics of advantage: why RAG logic favors niche sources

The reason is not system bias in favor of small sites — the reason is the mechanics of RAG. Perplexity looks for the fragment that is the most accurate and specific answer to the query. Niche sources win this competition due to three factors.

Depth in a narrow topic. A niche author who writes exclusively about technical SEO or exclusively about AI crawlers accumulates a level of detail unattainable for general media. An article "PerplexityBot vs Googlebot: technical comparison" on a niche SEO blog contains specific numbers, links to official documentation, and a comparative table of 20 parameters. Forbes material on "AI and SEO" covers a broad context but contains none of these specific details. A RAG system, when given a query about a specific technical parameter, will choose the niche material.

Fragmentable structure. Niche technical materials often have a better structure for RAG extraction — not due to special optimization, but due to the nature of the genre: technical documentation, step-by-step instructions, comparative tables are naturally organized into self-sufficient blocks. The narrative style of large media (where each paragraph depends on the previous one) is less "RAG-friendly."

Up-to-date data. Niche blogs often publish more timely updates on specific changes in their industry than large editorial offices, where each publication goes through a long editorial cycle. Freshness is a significant factor for Perplexity — and niche sites often have an advantage precisely in the "freshness window" of the first 24–48 hours after a significant industry event.

Practical conclusions: how to leverage this advantage

For niche website owners, this dynamic opens up a specific strategic opportunity that doesn't exist in Google. In Google, competition with Forbes or TechCrunch for positions requires a comparable backlink profile — which is unattainable for most niche sites in the short term. In Perplexity, competition occurs at the level of the quality of a specific paragraph — and here, a niche site with the right content structure can win right now.

There's an important caveat: "niche and deep" doesn't mean "long and complex." Materials cited by Perplexity are deep in terms of accuracy and specificity, not in terms of volume. An 800-word article with five specific verified facts and a clear Q&A structure is cited more often than a 5,000-word long read in a narrative style. The goal is maximum density of useful information per paragraph, not maximum article length.

Practical recommendation: identify 5–10 of the most specific questions in your niche that large media provide superficial answers to — and write materials where each of these questions receives a concrete, verified, self-sufficient answer in a separate block. This is the competitive advantage of a niche site in Perplexity.

Q&A Formatting as a Structural Signal

Among all the structural characteristics of the analyzed pages, Q&A formatting showed the most stable correlation with citation. This is not accidental — it directly corresponds to the architectural logic of the RAG system: the system looks for answers to questions, and content organized in a question-and-answer format is the most direct match for this search.

Why Q&A structure is a natural format for RAG

When a user asks a question in Perplexity, the system performs a vector search and looks for fragments semantically close to that question. A page with an H3 heading "How to verify PerplexityBot?" and an answer immediately following the heading is an ideal match — the heading describes the question, the first sentence after it is a direct answer, and the entire block is a self-sufficient fragment. The system doesn't just find this block — it identifies it as a direct match between the question in the query and the question in the heading.

Compare this with a regular section with the heading "PerplexityBot Verification": the same content, but the heading describes the topic, not a question. The vector score of these two fragments will be close — but at the reranking level, a question-formatted heading with a direct answer in the first sentence has an advantage: it is more self-sufficient and more accurately answers the query.
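The retrieval step described above can be illustrated with a toy ranking. This is a sketch under strong assumptions: it uses bag-of-words cosine similarity as a stand-in for learned embeddings, the three fragments are hypothetical sample texts, and nothing here reflects Perplexity's actual pipeline or its reranker. It only shows the general idea of scoring self-contained fragments against a query.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Lowercase the text and count alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical fragments: heading plus the first sentence of each block.
fragments = {
    "verify": "How to verify PerplexityBot? Compare the user agent and IP "
              "address of the request with the values the vendor publishes.",
    "budget": "What is crawl budget? Crawl budget is the number of pages "
              "a crawler fetches from a site in a given period.",
    "faq": "What is FAQPage markup? FAQPage markup describes question and "
           "answer pairs in a machine-readable form.",
}

query = bag_of_words("how to verify perplexitybot")
ranked = sorted(
    ((name, cosine(query, bag_of_words(text))) for name, text in fragments.items()),
    key=lambda item: item[1],
    reverse=True,
)
print(ranked[0][0])  # the fragment about verification ranks first
```

A real system would compare dense embeddings, where a question heading and a topic heading on the same subject score much closer to each other than they do in this word-overlap toy.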

What Search Engine Land's controlled experiment showed

Search Engine Land's controlled experiment (Nogami and Tannenbaum, 2025) provides the cleanest data on the impact of schema.org markup on AI output. Researchers created three almost identical pages: one with quality schema markup (including FAQPage), one with erroneous markup, and one without markup at all. Key result: only the page with quality schema markup appeared in the AI Overview. That page was also the best-ranked of the three in organic search (position 3), while the page with erroneous markup still ranked (position 8) but was not selected. The page without markup was not indexed at all.

An important practical conclusion: not just the presence of FAQPage markup, but its quality and completeness matter. A page with erroneous markup (missing mandatory fields, incorrect date formats, missing FAQ markup despite having Q&A content) did not get AI output even though it was indexed and ranked organically. The presence of Q&A content without appropriate markup is a missed opportunity.
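As an illustration of complete markup, the sketch below builds a FAQPage JSON-LD object from question-answer pairs and refuses empty fields. The `@type`, `mainEntity`, `name`, `acceptedAnswer`, and `text` properties come from the schema.org vocabulary; the function name `build_faq_jsonld` and the sample pair are hypothetical, and the check is a basic sanity test, not a substitute for a structured data validator.

```python
import json


def build_faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    if not pairs:
        raise ValueError("FAQPage needs at least one question-answer pair")
    entities = []
    for question, answer in pairs:
        # An empty question or answer is the kind of incomplete markup
        # this section warns about, so reject it early.
        if not question.strip() or not answer.strip():
            raise ValueError("question and answer must both be non-empty")
        entities.append({
            "@type": "Question",
            "name": question.strip(),
            "acceptedAnswer": {"@type": "Answer", "text": answer.strip()},
        })
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": entities,
    }


# Illustrative content only.
faq = build_faq_jsonld([
    ("What is FAQPage markup?",
     "FAQPage markup describes question and answer pairs on a page "
     "in a machine-readable form."),
])

# The JSON would be embedded in the page inside a
# <script type="application/ld+json"> element.
print(json.dumps(faq, indent=2))
```

The text in the markup should match the question and answer that are visible on the page.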

Numerical data: how much FAQPage markup provides

Several independent studies have measured the impact of FAQPage schema on AI citation, and the results are consistent. According to Relixir's study (2025), which analyzed 50 B2B and e-commerce domains, updating schema markup yielded a median increase in AI citations of 22% — with FAQPage showing the highest result among all markup types (+28%). According to BrightEdge, sites with FAQPage and HowTo markup receive 44% more AI citations compared to similar content without markup. According to Frase (2025), pages with FAQPage markup are 3.2 times more likely to appear in Google AI Overviews compared to pages without it.

Important context for these figures: only 0.6% of web pages have FAQPage markup (HTTP Archive Web Almanac 2024 data). With such a low level of implementation, pages with correct markup compete among themselves, not with the majority of web content. This means that implementing FAQPage markup today is an early-adopter advantage, and this advantage will shrink as adoption grows.

Optimal answer length in an FAQ block

Q&A formatting brings maximum effect only with the correct answer volume. According to Frase's analysis, the optimal answer length in an FAQ block for AI citation is 30 to 80 words. Answers shorter than 30 words lack sufficient context to be a self-sufficient answer to a complex question. Answers longer than 80 words become harder to extract as a single fragment and harder to scan. The 40–60 word range is a practical guideline: enough for a complete answer, compact enough for effective extraction.

Weak answer: "FAQ schema is very important for AI search." Strong answer: "FAQPage schema increases the frequency of AI citations by 22–44% (Relixir and BrightEdge data, 2025). The markup explicitly structures content as question-answer pairs, which is an ideal format for a RAG system that seeks self-sufficient answers to specific questions." The difference is obvious: the second answer contains specific numbers with sources and explains the mechanics — meaning it is self-sufficient and verified.
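The length guideline can be checked mechanically. The sketch below counts whitespace-separated words and compares the result with the 30–80 word range quoted above; the function names are illustrative, and a word count says nothing about whether an answer is specific or sourced.

```python
MIN_WORDS = 30
MAX_WORDS = 80


def word_count(answer: str) -> int:
    """Count whitespace-separated words."""
    return len(answer.split())


def classify_answer_length(answer: str) -> str:
    """Label an FAQ answer against the 30-80 word guideline."""
    count = word_count(answer)
    if count < MIN_WORDS:
        return "too short"
    if count > MAX_WORDS:
        return "too long"
    return "ok"


weak = "FAQ schema is very important for AI search."
strong = (
    "FAQPage schema increases the frequency of AI citations by 22–44% "
    "(Relixir and BrightEdge data, 2025). The markup explicitly structures "
    "content as question-answer pairs, which is an ideal format for a RAG "
    "system that seeks self-sufficient answers to specific questions."
)

print(word_count(weak), classify_answer_length(weak))      # 8 too short
print(word_count(strong), classify_answer_length(strong))  # 39 ok
```

The strong answer from the example sits just below the 40–60 word range suggested as a practical guideline and well inside the 30–80 word limits.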

Hybrid structure: how to combine narrative and Q&A

An important nuance: Q&A formatting does not mean that the entire article must be in FAQ format. A more effective approach is a hybrid structure with three components:

- The main text in a regular format with H2/H3 headings, formulated as questions or clear statements. This provides context and depth for the reader.

- Each H3 block begins with a direct answer in the first sentence, with the rest being details and evidence.

- A separate FAQ section at the end of the article with schema.org FAQPage markup for 4–6 of the most common questions on the topic. This gives the system explicitly structured question-answer fragments that are the easiest to extract.

Such a structure provides the system with fragments of three different types: structural context from H2/H3 blocks, specific facts from the first sentence of each block, and explicit Q&A pairs from the FAQ section. Different queries match different types of fragments — and in total, the page covers a significantly wider range of potential queries than purely narrative or purely FAQ material.

Answer in the first sentence: a pattern from our analysis

Another stable pattern from the analysis of 15 queries: pages where the answer to a question was contained in the very first sentence after the heading were cited more often than pages where the answer appeared after several sentences of introductory context. This directly corresponds to the logic of reranking: a fragment where the main information is at the beginning has higher information density and passes the second level of selection better. A fragment where the answer is hidden behind three sentences of introduction conveys less useful information in the first 50–80 tokens — and these tokens are the most important for reranker evaluation.

Practical rule: after each H3 heading formulated as a question, the first sentence should be a direct answer. Not "this question is very important," not "there are several approaches" — but a direct answer with a specific fact. The rest of the paragraph — details, explanation of mechanics, evidence.
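This rule can be turned into a rough self-audit. The sketch below assumes the article source is markdown with `###` headings, and it flags blocks whose heading is not a question or whose first sentence opens with one of the filler phrases mentioned above. The function name, the filler list, and the sample text are hypothetical, and the check is a heuristic, not a measure of how any reranker scores a fragment.

```python
import re

# Filler openers taken from the examples in the rule above.
FILLER_OPENERS = ("this question is", "there are several")


def audit_h3_blocks(markdown: str) -> list[dict]:
    """Report, for each H3 block, the heading and its first sentence."""
    # re.split with one capturing group yields:
    # [text before first H3, heading 1, body 1, heading 2, body 2, ...]
    parts = re.split(r"^###\s+(.+)$", markdown, flags=re.MULTILINE)
    report = []
    for heading, body in zip(parts[1::2], parts[2::2]):
        heading = heading.strip()
        # Take everything up to the first sentence-ending punctuation mark.
        first_sentence = re.split(r"(?<=[.!?])\s+", body.strip(), maxsplit=1)[0]
        report.append({
            "heading": heading,
            "is_question": heading.endswith("?"),
            "first_sentence": first_sentence,
            "starts_with_filler": first_sentence.lower().startswith(FILLER_OPENERS),
        })
    return report


# Hypothetical sample article.
SAMPLE = """\
### How to verify PerplexityBot?
Compare the user agent and IP address with the published values. Then log the result.

### PerplexityBot Verification
There are several approaches to this task. One of them is IP matching.
"""

for block in audit_h3_blocks(SAMPLE):
    print(block["heading"], block["is_question"], block["starts_with_filler"])
```

In the sample, the first block passes both checks and the second is flagged twice: its heading names a topic, and its first sentence delays the answer.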